Choose the Right RAG Development Company for Real-World Scale

Choose a RAG development company with confidence. Learn how to assess vendors, avoid POC-only traps, and match partners to your enterprise RAG roadmap today.

Most “RAG development company” pitches look identical—until your pilot melts down in production, retrieval fails silently, and compliance teams panic. The real differences between vendors only show up under pressure, and by then you’ve already burned through budget and executive patience.

At the center of this is retrieval-augmented generation—an architectural pattern that lets large language models ground their answers in your internal knowledge instead of guessing. Done right, retrieval-augmented generation (RAG) is the backbone of any serious enterprise RAG implementation, powering everything from internal Q&A to customer support and decision support tools.

The challenge: dozens of companies now advertise RAG development services, but their capabilities range from “we plugged an LLM into a search API” to genuine expertise in retrieval architecture, governance, and production operations. The label “RAG vendor” actually hides three very different archetypes: specialist RAG firms, general AI dev shops, and platforms that bundle RAG features.

In this guide, we’ll unpack those archetypes, introduce a pragmatic RAG Vendor Capability Assessment framework, and show you how to match the right partner to your risk profile and roadmap. Along the way, we’ll also explain where Buzzi.ai fits—as a specialist RAG development company focused on architecture, evaluation, and getting from prototype to durable production, not just another “we also do RAG” shop.

What a RAG Development Company Actually Does (and Doesn’t)

Why RAG Exists: From Static Knowledge Bases to Dynamic Retrieval

To understand what a real RAG development company does, you have to start with why retrieval-augmented generation exists at all. Out of the box, LLMs are pattern-matching machines: powerful, but prone to hallucinations when asked about facts that weren’t in their training data or are too recent or specific.

Enterprises live and die on precisely that kind of information: internal policies, customer tickets, contracts, technical docs, and logs. You don’t just need plausible answers; you need answers grounded in your own knowledge base and current reality. That’s exactly what an enterprise RAG implementation is designed to deliver.

Traditional FAQ bots and keyword search fail here. Ask a legacy FAQ bot: “Does our parental leave policy apply to contractors based in Germany?” It will probably surface a generic article about leave or miss entirely. A RAG system, by contrast, performs semantic search implementation over your latest HR policy PDFs, extracts the relevant sections, and injects them into the prompt so the LLM can generate a specific, grounded answer—plus links back to source documents.

Crucially, RAG is not a single product you buy. It’s an architectural pattern composed of several layers: knowledge base ingestion (bringing in data from source systems), indexing (often via vector databases), retrieval (selecting and ranking relevant chunks), and generation (the LLM itself). A RAG development company designs and operates that whole pipeline so your applications stay accurate as your data evolves.

How a RAG Development Company Differs from a General AI Agency

This is where many buyers get tripped up. A general AI agency or chatbot vendor might be excellent at UX, integrations, and basic LLM app wiring—but treat retrieval as a black box. A specialist RAG development company, on the other hand, is obsessed with the retrieval layer.

Specialists focus on retrieval architecture, vector database integration, document chunking strategy, ranking algorithms, and retrieval quality metrics. They know how to tune indexes, choose between hybrid retrieval (keyword + vector) and pure dense retrieval, and instrument the entire system with observability so you can see when and why retrieval fails.

Imagine two vendors with nearly identical slide decks. Vendor A, a general AI systems integrator, proposes “search over your docs + GPT-4” and a pretty front-end. Vendor B, a true RAG development company, walks you through retrieval evaluation datasets, latency profiles for different vector DBs, access-control-aware search, and how they debug bad results in production. On paper the solutions look similar; in real-world edge cases—multilingual corpora, noisy PDFs, rapidly changing data—it’s Vendor B that keeps your system from buckling.

When You Actually Don’t Need a Specialist RAG Partner

It’s also important to be honest: not every team needs the best RAG development company for enterprise knowledge bases. If you’re experimenting with a small, homogeneous knowledge base, low traffic, and minimal compliance exposure, you may be fine with an AI platform with RAG features baked in.

Platform-native RAG is usually sufficient when your data is limited (say, a few hundred internal FAQs), mostly structured, and the impact of a wrong answer is low. Think of an internal helpdesk bot for IT tickets or a lightweight engineering FAQ. In these cases, the overhead of engaging a dedicated RAG consulting firm may not be justified.

However, once complexity, traffic, or regulatory exposure increase, the calculus changes quickly. A regulated financial advice assistant, a clinical decision support helper, or a multi-region HR policy bot is no longer a toy. Here, relying purely on platform defaults becomes a liability, and bringing in specialist RAG development services to design a more robust architecture is often the difference between a flashy POC and a resilient system.

The Three RAG Vendor Archetypes and How They Compare

When you start searching for a RAG development company, you’ll encounter three broad archetypes: specialist RAG firms, general AI dev shops and systems integrators, and AI platforms with RAG features. Each can be the right choice in the right context—but they solve fundamentally different problems.

Specialist RAG Development Firms

Specialist firms are built around retrieval-augmented generation as a core competency. Their teams include experts in retrieval algorithms, production RAG pipeline design, evaluation frameworks, and domain-specific RAG patterns. They live and breathe questions like: How do we keep retrieval quality high as the knowledge base doubles? How do we measure hallucination risk?

These firms typically offer capabilities such as hybrid retrieval (keyword + vector), advanced document chunking, custom rerankers, guardrails for generative AI, and fine-grained observability across the whole pipeline. They are often the best RAG development company for enterprise knowledge bases that are messy, multi-lingual, and cross multiple business units or tenants.

Consider a hypothetical specialist working with a global SaaS vendor to build multilingual support across 15 languages with strict SLAs. The project requires domain-specific RAG, region-specific compliance rules, and 24/7 monitoring of answer quality. A specialist can design the retrieval layer, evaluation harness, and governance workflows to make that sustainable—this is where Buzzi.ai operates, providing RAG consulting and implementation services for enterprises that have outgrown prototypes.

General AI Dev Shops and Systems Integrators

General AI development shops and systems integrators offer a wide menu: chatbots, analytics, custom apps, and now RAG. Their superpower is orchestration—stitching together existing systems, handling enterprise security requirements, and managing large multi-team projects.

When your primary need is integration—tying RAG into CRM, ticketing, data warehouses, and legacy systems—these vendors can be compelling. IT often prefers them because of existing relationships and procurement frameworks. Many even position themselves as top RAG vendors for production-ready LLM applications, leveraging their broader track record.

The risk is that RAG architecture becomes an afterthought. Without deep expertise in retrieval, they may lean entirely on platform defaults, skip retrieval evaluation, and lack the tools to debug retrieval drift or hallucinations once real users push the system. The result: a great-looking demo that slowly degrades in production as new documents, languages, and use cases pile in.

AI Platforms with RAG Features

The third archetype is the AI platform with RAG features baked in: cloud providers, LLM platforms, or vector DB vendors offering “RAG in a box.” These solutions excel at speed to pilot. In a few hours, you can plug in a data source, configure basic vector database integration, and deploy a working chatbot.

The strengths are real: managed infrastructure, lower upfront costs, strong security baselines, and tight integration with your existing cloud stack. For teams trying to validate whether RAG is even useful, these platforms are often the right starting point.

But there are trade-offs in any RAG platform vs custom RAG development company comparison. Platform architectures are opinionated; customization of ingestion, retrieval, and evaluation can be limited. Governance responsibilities (Who owns evaluation? Who approves new data sources?) can be blurry. And vendor lock-in is a real concern when your core knowledge fabric and indexes are tied to a single provider’s stack.

Capabilities That Matter for Enterprise-Grade RAG Implementations

Regardless of which archetype you choose, there are a handful of capabilities that determine whether your RAG deployment will withstand real-world load and risk. This is where you separate marketing from reality in any RAG vendor evaluation.

Retrieval and Vector Database Architecture

Most of the perceived “intelligence” of a RAG system comes not from the LLM, but from retrieval. Index design, vector database integration, and retrieval strategies collectively determine what context the model sees—and therefore what it can reliably answer.

A serious RAG development company with vector database and retrieval expertise will have clear opinions on when to use hybrid retrieval (keyword + vector), how to apply metadata filtering, and how to enforce access control at query time. They will also be able to explain the latency/quality trade-offs across different vector DBs and deployment architectures in a production RAG pipeline.

Contrast two retrieval architectures. The naive one dumps all documents into a single index with default settings, no metadata, and no access control. It works in the lab but falls over when traffic spikes or security rules evolve. The robust one segments indexes by domain, enriches them with metadata, applies hybrid search, and is monitored via retrieval quality metrics. Under load, only the latter continues to deliver consistent, safe answers.

Data Ingestion, Chunking, and Governance

The ingestion layer is where theory meets messy reality. A strong vendor will invest heavily in connectors, document parsing, normalization, and continuous updates from source systems so your knowledge base remains fresh. This is where knowledge base ingestion stops being a one-time import and becomes an ongoing process.

Within ingestion, document chunking strategy is one of the most underrated levers in RAG architecture. Chunk too large and retrieval pulls in irrelevant material; chunk too small and context gets fragmented, forcing the LLM to stitch together partial answers and increasing hallucination risk. Good vendors use semantic or structural cues, overlapping windows, and domain knowledge to find the right balance.

Governance is the third pillar: handling PII, mapping access controls from source systems, enforcing data residency, and ensuring auditability. For regulated industries, this is non-negotiable. It’s why enterprise teams investigating a governed knowledge fabric often explore resources like the Ultimate Enterprise RAG Solution for a Governed Knowledge Fabric and then look for partners who can implement those principles in practice.

Evaluation, Observability, and Hallucination Mitigation

In a proof of concept, it’s tempting to eyeball a few answers and declare success. For real deployments, you need systematic RAG performance benchmarking and observability long before go-live. This is where mature RAG architecture practices differ from one-off demos.

A capable vendor will propose offline evaluation sets, human-in-the-loop review workflows, and live feedback loops in production. They will instrument dashboards tracking retrieval hit rates, coverage across document types, latency distributions, and hallucination incidents—turning amorphous “model quality” into measurable retrieval quality metrics.

Practical hallucination mitigation goes beyond clever prompts. It includes answer classification (e.g., “I don’t know” detection), filtering for ungrounded content, fallback behaviors to search or human escalation, and clear UX indicators of confidence and source citations. Academic work such as the original RAG paper (Lewis et al., 2020) and industry guides like Pinecone’s overview of retrieval-augmented generation (Pinecone Learn) provide useful baselines—but your vendor should be able to show how they translated these ideas into production systems.

Risk, Compliance, and Regulated-Industry Adaptations

For finance, healthcare, legal, and public sector teams, the bar is higher. Here, selecting a RAG development company is less about demos and more about risk posture. You should expect explicit conversation about data lineage, role-based access, redaction strategies, and governance workflows.

Best-practice frameworks like the NIST AI Risk Management Framework provide useful guidance for aligning RAG initiatives with enterprise risk management. A qualified vendor should be comfortable mapping their practices to such frameworks and explaining how their RAG consulting and implementation services for enterprises address each dimension.

A low-risk internal Q&A bot for marketing collateral might get by with lightweight review and logging. A high-risk clinical or financial assistant, by contrast, needs explicit sign-offs from legal and compliance, robust audit trails, and strict control over which data sources can ever be surfaced. Not every RAG development company is equipped for this; you want one with prior work in regulated environments and clear governance playbooks as part of your broader enterprise AI roadmap.

In enterprise RAG, retrieval, ingestion, evaluation, and governance matter more than which LLM you picked. Your vendor choice should reflect that.

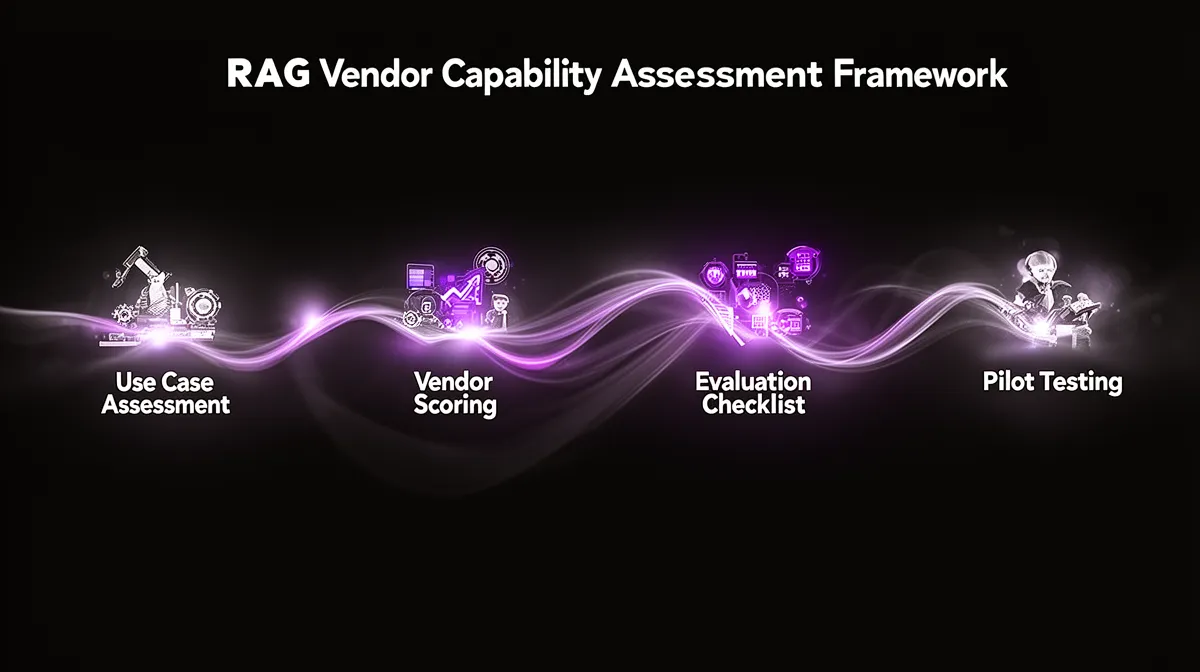

The RAG Vendor Capability Assessment Framework

Knowing what matters in a RAG system is step one. Step two is building a repeatable way to evaluate vendors. That’s the point of a RAG Vendor Capability Assessment framework: turning a chaotic vendor landscape into a structured decision.

Step 1: Clarify Your RAG Use Case Patterns and Risk Profile

Before you compare vendors, you need clarity on what you’re actually building. Is this internal Q&A, external support, expert decision support, or workflow automation? Is the primary goal cost reduction, improved accuracy, or new revenue?

Then assess complexity along a few dimensions: size and volatility of the knowledge base, reasoning depth, multi-lingual requirements, personalization, and regulatory exposure. A small, static internal FAQ in one language is nothing like a multilingual, personalized advisor in a regulated domain.

This self-assessment tells you whether you truly need a specialist RAG development company or can rely on a generalist partner or platform. For example, high reasoning depth plus high regulatory exposure is almost always a case for a specialist—especially if you’re thinking about how to choose a RAG development partner for complex retrieval use cases that might become core to your business.

Step 2: Score Vendors Across Five Capability Dimensions

Once you understand your needs, you can start scoring vendors rather than just collecting logos. A pragmatic approach is to rate each candidate from 1–5 across five capability dimensions:

- Retrieval architecture and vector DB expertise

- Data ingestion and governance

- Evaluation, observability, and reliability

- Domain expertise and solution patterns

- Delivery, operations, and support

This turns vague impressions into a structured RAG vendor evaluation checklist for enterprise AI teams. It also lets you compare top RAG vendors for production-ready LLM applications on something more meaningful than “who had the slickest demo.” You can even weight dimensions based on risk: in regulated use cases, governance and evaluation may matter more than UI polish.

In practice, you might find that Vendor A scores highest on integration and delivery, Vendor B leads in retrieval and evaluation, and Vendor C is strong on domain expertise. The framework helps you decide which trade-offs make sense, instead of defaulting to the biggest brand name.

Step 3: Use a RAG Vendor Evaluation Checklist in Diligence

With your scoring model in place, diligence becomes much more targeted. Rather than asking, “Can you do RAG?”, you ask questions that expose depth of experience and alignment with your enterprise AI roadmap.

Your RAG vendor evaluation checklist for enterprise AI teams might include questions like:

- Describe a past RAG project similar to ours. What went wrong and how did you fix it?

- How do you measure retrieval quality? Show us sample RAG evaluation framework dashboards or reports.

- Which vector databases have you used in production, and how do you choose between them?

- How do you handle guardrails for generative AI, including hallucination mitigation and escalation?

- What does your on-call and incident response process look like for RAG systems in production?

Ask for concrete artifacts: architecture diagrams, sample evaluation reports, and, where possible, access to a sandbox or anonymized pilot. Vendors that do real RAG development services will be eager to show this; vendors selling slideware will struggle.

Step 4: Validate with POCs and Pilots Before You Commit

Finally, use pilots to validate, not to entertain. Too many organizations run POCs that test novelty rather than durability. A better approach is to design a 4–6 week pilot that specifically measures retrieval quality, latency, governance, and path-to-production readiness.

Define clear success criteria upfront: grounded answer rates on a labeled test set, acceptable latency under peak load, adherence to governance constraints, and operational readiness (monitoring, alerts, runbooks). Run multiple vendors against the same datasets and evaluation harness so you get apples-to-apples RAG performance benchmarking.

The goal is to avoid being trapped by POC-only vendors who have no plan for hardening, scaling, and operating your system. You want partners who treat RAG prototyping to production as a continuum—offering RAG consulting and implementation services for enterprises that carry through from assessment to live operations.

A good RAG pilot doesn’t just prove that something is possible; it proves that it’s operable.

Mapping Your RAG Requirements to the Right Vendor (and Where Buzzi.ai Fits)

By now, it should be clear that “RAG vendor” is not a single category. The right choice depends on your use case, risk profile, and ambitions. The question is how to apply that insight to your own roadmap.

Match Common Enterprise Scenarios to Vendor Archetypes

Consider a few representative scenarios. A small internal IT FAQ bot serving a single region, with no sensitive data, is a great fit for an AI platform with RAG features; speed and simplicity matter more than ultimate flexibility. A global support assistant inside a multi-tenant SaaS product, serving thousands of customers, likely demands a specialist RAG development company with vector database and retrieval expertise.

For a regulated wealth-management advisor that surfaces portfolio insights and policy explanations, you almost certainly want a specialist RAG consulting firm that can design robust governance and evaluation. Meanwhile, a marketing content assistant that occasionally references your brand guidelines might be fine with a general AI dev shop or system integrator.

The goal is not prestige but fit. The “best RAG development company for enterprise knowledge bases” is the one whose strengths line up with your complexity, risk, and timeline—not the one with the flashiest conference booth.

Pricing, Ownership, and Long-Term Flexibility

Pricing and engagement models vary, but most fall into a few patterns: fixed-scope projects, ongoing retainers, outcome-based pilots, and platform subscription bundles. Platform-centric approaches often look cheaper at first because infrastructure and tooling are bundled into your cloud spend.

What matters more over 2–3 years is ownership. Who controls your RAG architecture, evaluation pipelines, and vector indexes? If everything is defined inside a single platform’s console, switching later can be painful. A custom implementation with a specialist partner may cost more upfront but give you clearer control over data flows, observability, and evolution of your enterprise AI roadmap.

In other words, there’s a real trade-off between convenience and flexibility in any RAG platform vs custom RAG development company comparison. For some use cases, fast and opinionated is ideal. For others—especially those core to your business—you may prefer a vendor who helps you design portable assets that can outlive any single platform contract.

Where Buzzi.ai Fits in the RAG Vendor Landscape

Buzzi.ai positions itself squarely as a specialist RAG consulting firm and development partner. Our focus is on complex retrieval, RAG architecture design, evaluation frameworks, and production-ready implementations—not one-off demos. We also bring experience with multimodal and AI voice agent front-ends, including WhatsApp-based AI Agent deployments in emerging markets.

We’re a strong fit for enterprises that have already proven value in a POC and now need to scale: consolidating fragmented knowledge bases, tightening governance, and building a durable enterprise RAG implementation. We’re not the right choice for ultra-simple bots that can live entirely inside a platform template, and we’ll tell you that upfront.

In one anonymized example, a customer came to us with a fragile, platform-built POC: retrieval was inconsistent, governance was ad hoc, and evaluation was manual. Over a focused engagement, we re-architected ingestion and retrieval, implemented a proper RAG evaluation harness, and hardened the system for production. The result was a governed, observable RAG stack that the customer’s own teams could operate and extend—backed by ongoing RAG development services from Buzzi.ai where they needed extra depth.

If you’d like to apply the framework from this article to your own roadmap, a natural next step is an AI discovery and RAG assessment workshop with Buzzi.ai. Together, we can stress-test your vendor shortlist and architecture options before you commit significant budget.

Conclusion: Turn RAG Vendor Chaos into a Competitive Advantage

The phrase “RAG development company” hides enormous variation in capability and focus. Some vendors are retrieval specialists; others are generalists or platforms with RAG as a checkbox feature. Those differences only surface under real-world load, edge cases, and governance pressure.

A structured RAG Vendor Capability Assessment framework—grounded in retrieval architecture, ingestion, evaluation, and governance—lets you align vendor selection to your complexity and risk profile. It moves you beyond slide decks and into measurable criteria you can defend to technical and non-technical stakeholders alike.

Platform-native RAG is often the right tool for low-risk experimentation and simple use cases. But as your RAG initiatives touch regulated workflows, critical decisions, or core products, the value of a specialist partner like Buzzi.ai grows: someone who can design, evaluate, and operate the system, not just demo it. Start by applying this framework to one high-priority initiative—and, if helpful, bring in a partner to sanity-check your assumptions before they become expensive commitments.

FAQ: RAG Development Companies and Enterprise Vendor Selection

What is a RAG development company, and how is it different from a general AI agency or chatbot vendor?

A RAG development company specializes in building systems based on retrieval-augmented generation, where LLMs are grounded in your internal knowledge via a structured retrieval pipeline. These vendors focus on ingestion, indexing, retrieval, and evaluation as first-class problems. General AI agencies or chatbot vendors may build front-ends and plug into basic search, but often treat retrieval as a black box or platform default rather than a core competency.

When does my enterprise actually need a specialist RAG development company instead of relying on platform-native RAG features?

You typically need a specialist once your use case involves large or fast-changing knowledge bases, multiple languages, sensitive or regulated data, or mission-critical workflows. Platform-native RAG is fine for small internal FAQs, experiments, and low-risk tools with limited scope. As soon as inaccurate answers could trigger financial, legal, or safety consequences, a specialist partner with deep retrieval and governance experience becomes much more important.

What are the key capabilities to evaluate when shortlisting RAG vendors for complex, high-stakes use cases?

Focus on four areas: retrieval and vector database architecture, data ingestion and governance, evaluation and observability, and experience in your domain. Ask vendors to show how they design indexes, handle access control, and measure retrieval quality over time. For high-stakes use cases, it’s critical that they can demonstrate repeatable processes for hallucination mitigation, incident response, and alignment with your existing risk and compliance frameworks.

How can we practically measure retrieval quality and hallucination risk during a RAG pilot or proof of concept?

Start by creating a labeled test set of representative questions, expected answers, and source documents. During the pilot, log which documents were retrieved, what answers were generated, and how they compare to your ground truth. Then compute metrics such as grounded answer rate, coverage of key document types, and hallucination rate, and review a sample manually each week to ensure your RAG performance benchmarking reflects real business expectations.

What should a robust RAG vendor evaluation checklist include for enterprise AI and ML leaders?

A good checklist covers technical, operational, and governance dimensions. On the technical side, it should probe retrieval strategies, document chunking strategy, and use of retrieval quality metrics. Operational questions should address monitoring, on-call, and incident handling, while governance items should explore data lineage, access control mapping, and compliance workflows—especially if you work in a regulated industry.

How do platform-based RAG solutions compare to custom RAG implementations in terms of cost, ownership, and flexibility?

Platform-based solutions usually win on short-term cost and speed: you pay via subscription or cloud spend and get a working system quickly. However, most of the architecture and evaluation logic lives inside the platform, which can make deep customization and long-term portability harder. Custom implementations with a specialist RAG development company may cost more upfront but give you clearer ownership of indexes, evaluation frameworks, and architecture decisions, reducing lock-in over time.

What special considerations apply when choosing a RAG development company for regulated industries like finance or healthcare?

In regulated domains, you need vendors who can speak fluently about data residency, auditability, and alignment with frameworks such as the NIST AI RMF. Ask for examples of how they have handled PII, role-based access, redaction, and human-in-the-loop review in prior projects. It’s also wise to involve legal and compliance partners early, so vendor capabilities and your internal risk policies are aligned before any system goes live.

How can we design a fair POC process to compare multiple RAG vendors using the same data and success metrics?

Define a shared dataset, test questions, and success criteria before engaging vendors, and insist that each candidate uses the same inputs. Measure groundedness, coverage, latency, and governance compliance across all pilots, and run them for a similar duration (for example, 4–6 weeks). This levels the playing field and reveals which vendors can truly take you from POC to production, not just win a demo bake-off.

What engagement and pricing models do RAG development companies typically use, and how do they affect long-term vendor lock-in?

Common models include fixed-scope projects, time-and-materials retainers, outcome-based pilots, and bundled platform subscriptions. Fixed projects and outcome-based pilots often focus on getting a first system live, while retainers support continuous improvement. To manage lock-in, pay attention to who owns the architecture diagrams, evaluation pipelines, and vector indexes after the engagement—well-structured contracts should leave you with portable assets, not just a monthly invoice.

Where does Buzzi.ai fit among RAG vendors, and what kinds of enterprise RAG projects is it best suited for?

Buzzi.ai is best suited to enterprises running complex, high-stakes RAG initiatives that require strong retrieval, governance, and production-readiness. We specialize in designing and operating robust RAG architectures, including voice and messaging front-ends, rather than just building simple bots. If you’re unsure how to proceed, starting with an AI discovery and RAG assessment workshop with Buzzi.ai is a practical way to clarify your roadmap and vendor strategy before scaling up.