Logistics AI Development Services for Multi-Party Reality

Most logistics AI fails because it assumes one company is in charge. That's fantasy. Real supply chains are messy, political, and split across carriers, brokers, warehouses, shippers, and customers who don't share incentives or clean data.

That's why multi-party logistics AI development matters more than another demand forecast model or route optimizer. According to a 2026 Trax Technologies report, 70% of AI projects fail because of data quality issues, and poor data quality costs organizations $12.9 million a year. In this article, I'll show you what actually breaks in multi-company coordination, what architecture holds up, and where logistics AI development services usually oversell the easy part.

What Logistics AI Development Services Actually Mean

37%. That's the share of freight forwarders' apparel and fashion customers who said they expect AI-powered solutions, according to BCG in 2026. I don't read that as demand for another dashboard with prettier colors. I read it as impatience. People want the shipment to show up, the exception handled before it turns into a fire, and the plan to hold together after three different companies touch it.

I've seen how this goes. At 6:12 a.m. the warehouse team hits its labor target and everyone feels good. The carrier still misses pickup, the TMS chooses the cheapest route, and two days later the retailer is eating a stockout. Nobody thinks they screwed up because each team can point to its own metric and say, "We did our part." That's exactly the problem.

A lot of people hear multi-party logistics AI development and think of a tighter forecast, a cleaner ETA model, maybe a routing engine that trims 4% off miles driven. Fine. Useful. Still too narrow. I think that's how companies end up making one corner of the operation look smarter while the wider network gets dumber.

Logistics AI development services should build decision systems, not isolated models. The real work is helping a network plan shipments, reroute around disruptions, handle exceptions, and coordinate actions across carriers, warehouses, brokers, suppliers, and customers.

The ugly truth sits right in the middle of this: a single company can optimize its own dock schedule and still make the broader supply chain worse. Your warehouse can look efficient on paper while your carrier wastes 47 minutes at the gate. Your transport system can save money on lane selection while your customer pays with empty shelves. Local win. Network loss.

Logistics AI development services have to include inter-organizational AI coordination, not just internal automation. That's what those customer expectations are really pointing at: better service across company boundaries, real-time supply chain visibility, and decisions that still make sense once plans collide in the real world.

70%. That's how many AI projects fail because of data quality issues, according to Trax Technologies in 2026. That number doesn't surprise me one bit. B2B logistics AI integration usually breaks in boring places — missing status events, bad master data, ugly handoffs between systems, shaky rules about what one company can share with another.

Start there. Not with the model.

If you're buying or building multi-party supply chain AI, map the coordination problem first. Who makes which decision? What event changes that decision? What can each party share safely? That's where privacy-preserving machine learning matters. Same deal with federated learning for logistics. The point isn't magic. It's getting multiple companies to act on compatible information without exposing more than they should.

That's the actual logistics coordination architecture. Not one giant brain floating above everything and issuing commands. A working set of agreements about who acts next, which signal matters, and how AI helps every party see the same mess clearly enough to move.

If you want this stuff to survive detention fees, chargebacks, and a missed retail delivery window at 4:30 p.m., don't buy the flashiest demo in the room. Define the shared decisions first. Fix the handoffs. Build around coordination across organizations. You can see that idea pushed further in our coordination-first multi-agent system development guide. Are you solving for one team's metric, or for what actually happens after the handoff?

Why Single-Organization Logistics AI Fails in Practice

Everybody says the same thing about logistics AI: get better predictions, put a dashboard on top, connect the feeds, and the network gets smarter. Sounds clean. Sounds modern. Sounds like the vendor demo you saw in 2025 with the glowing control tower and the neat little ETAs.

It's also incomplete.

I watched one rollout fall apart over something embarrassingly small: timestamps. Not strategy. Not model accuracy. Timestamps. A team had the polished model, the command-center view, and all the usual confidence. Then live carrier and warehouse-partner data hit production. EDI messages showed up late. Arrival times came through in different time zones. Exception codes were blank or missing altogether. Three days later, people had already stopped trusting the recommendations.

A missed pickup doesn't stay a missed pickup for long. It turns into a dock queue. Then detention. Then chargebacks. Then that familiar meeting where the shipper, carrier, and warehouse each insist the problem started somewhere else. I've seen versions of that meeting run 47 minutes and end with nothing except a bigger email chain.

People hear "poor data quality" and think spreadsheets with ugly headers. Logistics people know better. They know it looks like freight not moving. Trax Technologies put a number on it in a 2026 report: poor data quality costs organizations $12.9 million per year. That's not abstract cleanup work. That's money leaking out through bad handoffs, stale events, and decisions made on half-true information.

The part most vendors skip is the part that actually matters: single-company AI fails because logistics doesn't belong to a single company.

A shipper wants transportation cost down. A carrier wants higher asset utilization and fewer empty miles. A warehouse wants arrivals it can plan labor around instead of getting slammed at 4:30 p.m. by twelve trucks that were all "on time" until they weren't. A broker wants coverage and margin. The customer wants speed and certainty at the same time, which is convenient for them and terrible for everyone else.

Put one model in the middle of all that and pretend those incentives line up on their own? Bad idea. You'll just produce conflict faster.

MIT Sloan Management Review is right that artificial intelligence is creating new opportunities for logistics and supply chain management. I'd argue people stretch that point way past what it can support. Opportunity isn't architecture. If your system treats a multi-company workflow like an internal planning problem, it'll crack the minute a partner withholds data, ignores a recommendation, or protects its own SLA instead of yours.

That's why glossy demos feel so convincing and so fake at the same time. One dataset. One control tower. One decision-maker. Real freight has none of those luxuries.

After you've seen this enough times, you stop asking whether the model is impressive and start asking uglier questions earlier.

- Who owns the event data? If your design assumes clean status events across every partner, you're already behind. What usually shows up is stale EDI, inconsistent timestamps, missing milestones, and exceptions that never enter the record at all. That's how real-time supply chain visibility disappears without anybody admitting it disappeared.

- Who can say no? Centralized decision logic sounds great right up until the AI recommends rerouting freight or reprioritizing inventory as if every participant has to comply. They don't. Carriers have operating rules. Warehouses have appointment limits. Brokers have contractual obligations. Every company has its own KPIs, and none of them vanish because your model found a clever answer.

- Who eats the cost? This one gets buried under nice language about optimization. A warehouse scheduling model can improve dock throughput while pushing two extra hours of detention onto carriers sitting outside the gate. That's not network optimization. That's cost shifting dressed up with math.

- What can be shared safely? Everybody loves inter-company data sharing until legal gets involved and starts asking about rates, margins, customer lists, or odd operational edge cases another party shouldn't see. Fair question. Without privacy-preserving machine learning or federated learning for logistics, plenty of designs die in legal review before they ever get near production.
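
The "who eats the cost?" question in the list above comes down to simple arithmetic. Here is a minimal sketch of that check. The party names, dollar figures, and labels are made up for illustration and are not taken from any real network.

```python
# Hypothetical per-party cost changes (USD per day) after a scheduling change.
# Negative means that party saves money, positive means it pays more.
cost_delta = {
    "warehouse": -600.0,  # faster dock throughput
    "carrier": 900.0,     # extra detention while trucks wait at the gate
    "shipper": 0.0,
}


def classify_change(deltas: dict[str, float]) -> str:
    """Label a change by its network-wide effect, not by one party's win."""
    net = sum(deltas.values())
    someone_pays = any(d > 0 for d in deltas.values())
    someone_saves = any(d < 0 for d in deltas.values())
    if net < 0:
        # The network as a whole is cheaper; check whether anyone was hurt.
        return "net gain, needs compensation" if someone_pays else "network gain"
    if someone_saves:
        # One party wins while the network total is flat or worse.
        return "cost shifting"
    return "no gain"


result = classify_change(cost_delta)
print(result)
```

With these assumed numbers the warehouse saves 600 while the carrier pays 900, so the change is labeled cost shifting even though the warehouse's own dashboard would show an improvement.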

This is why so many logistics AI development services overpromise what they're actually selling. They sell prediction where you need coordination. They pitch internal automation where you need B2B logistics AI integration. They call it transformation even though it's really an isolated model waiting to be ignored by the next outside partner in the chain.

No, the lesson isn't that AI doesn't work in logistics. That's lazy thinking.

The lesson is that multi-party logistics AI development has to begin with divided authority, not average forecast accuracy.

The first question isn't "How accurate is the model?" It's "Who can act on this recommendation, under what constraints, using what shared facts?"

Inter-organizational AI coordination matters more than squeezing out another point of forecast accuracy if nobody across the chain can execute together anyway. Build a logistics coordination architecture around event ownership, permissioned data exchange, exception paths, and negotiated decisions between partners. If you're serious about multi-party supply chain AI, start with handoffs and disputes first. Prediction comes later.

If you want a practical next step, study systems built for messy exceptions instead of perfect plans: logistics AI solutions that handle exceptions. Because really—what's your model supposed to do when two partners disagree on what just happened?

Multi-Party Logistics Patterns That AI Must Support

At 2:10 p.m. in Savannah, everything looked fine. The container flipped to available, the carrier saw green, somebody upstream marked their piece complete, and for about three minutes the dashboard told a very comforting lie. Then the calls started. No labor assigned at the warehouse. No open yard slot. An appointment window that existed in the TMS but not in real life. By 2:37, people weren't talking about visibility anymore. They were arguing about detention.

That's the part I think gets flattened in a lot of logistics AI talk. Oracle and plenty of other vendors love the familiar list: predict demand, plan shipments, monitor cargo conditions, tighten warehouse utilization, cut route time. Sure. That's real work. Trucks moving still matters.

But freight usually doesn't get expensive because a truck moved. It gets expensive in the awkward gap between "we're done" and "you're actually ready." That's where multi-party logistics AI development either earns its keep or turns into another nice-looking model with no stomach for operations.

A single-company system can ask a clean question: what's my best next move? A network with brokers, carriers, 3PLs, cross-docks, yards, ports, and customer facilities has to ask something uglier: what's the least bad coordinated move for everybody at once? That's not semantics. That's different math, different incentives, different failure modes.

Handoffs break faster than linehaul

The problem usually isn't missing status. It's fake readiness. One party closes a task, the next party isn't truly ready, and the software acts like those are the same thing.

The Savannah drayage example is exactly why inter-organizational AI coordination can't treat milestone events as truth just because codes line up neatly on a screen. It has to compare readiness signals across companies, score confidence instead of assuming certainty, and catch mismatches before they turn into missed retail delivery windows or fee disputes nobody wants to own.
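
One way to picture the readiness check described above is the sketch below. The signal names, the weights, the `AVAILABLE` milestone code, and the 0.8 threshold are all assumptions chosen to mirror the Savannah example, not a standard.

```python
from dataclasses import dataclass

# Hypothetical weights: how much each receiving-side signal contributes to
# confidence that a handoff is truly ready. Tune these per network.
READINESS_WEIGHTS = {
    "labor_assigned": 0.4,
    "yard_slot_open": 0.3,
    "appointment_confirmed": 0.3,
}


@dataclass
class Handoff:
    shipment_id: str
    upstream_milestone: str            # what the sending party reported
    receiver_signals: dict[str, bool]  # what the receiving party reports


def readiness_confidence(handoff: Handoff) -> float:
    """Sum the weights of the receiver signals that are actually true."""
    return sum(
        weight
        for name, weight in READINESS_WEIGHTS.items()
        if handoff.receiver_signals.get(name, False)
    )


def is_fake_readiness(handoff: Handoff, threshold: float = 0.8) -> bool:
    """Upstream says done, but the receiver's own signals disagree."""
    upstream_done = handoff.upstream_milestone == "AVAILABLE"
    return upstream_done and readiness_confidence(handoff) < threshold


savannah = Handoff(
    shipment_id="CONT-001",
    upstream_milestone="AVAILABLE",
    receiver_signals={
        "labor_assigned": False,
        "yard_slot_open": False,
        "appointment_confirmed": True,  # exists in the TMS, not in real life
    },
)
print(is_fake_readiness(savannah))
```

The design choice here is to treat the upstream milestone as a claim and the receiver's signals as evidence. A mismatch gets flagged before anyone dispatches a truck against it.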

I'd argue most teams stop too early here. They build event ingestion and call it orchestration. That's not orchestration. That's transcription with better branding.

Cross-docks will punish selfish optimization every single time

I watched this in a building pushing roughly 180 trailers a day. Local metrics looked great. Fast unloads. Nice dock numbers. Everybody could point to a chart and say they were efficient. The network still got slower.

If each node optimizes its own throughput, the shared system can degrade. A cross-dock wants to unload the easiest trailer first because it's quick and makes productivity look sharp. The downstream carrier is staring at a 6 p.m. departure cutoff. The shipper cares about high-priority SKUs that can't miss store replenishment. All three are rational. All three can conflict within the same hour.

Real multi-party optimization inside a logistics coordination architecture has to hold those competing priorities at once instead of letting one company post a local win that creates two downstream losses. So no, this isn't just warehouse sequencing with shinier labels. Serious logistics AI development services need shared priority scoring rules that multiple parties can trust enough to act on.

An appointment isn't truth; it's negotiation frozen in time

An appointment is a negotiated guess. Treat it like a hard fact and your model's going to look smart right up until operations laughs at it.

Say weather hits Atlanta and pushes linehaul ETA by 90 minutes. The consignee stops taking freight after 4 p.m. The broker can rebook for tomorrow morning. The warehouse can hold labor one extra hour, but now there's added cost and somebody has to approve it fast enough for the decision to matter. Basic ETA prediction helps, obviously. B2B logistics AI integration is what decides which tradeoff wins, who signs off on it, and how quickly that decision spreads across every party affected.

You also need safe inter-organization data sharing. Some networks can use federated learning for logistics or privacy-preserving machine learning so partners improve delay prediction together without exposing rates, margins, or customer-level details they don't want drifting around the ecosystem.

If you're building multi-party supply chain AI, start where accountability changes hands and incentives collide: handoff validation, cross-dock sequencing, appointment negotiation, exception escalation, and shared ETA management.

The funny part is the best systems here rarely look flashy. They look more like adult supervision with receipts than magic automation. Not sexy. Much better under pressure. And if your model can't survive contact with real freight, what exactly did you build?

Inter-Organizational Design for Logistics AI Development

Why do so many shared logistics AI projects feel exciting in the kickoff and radioactive by the third week?

You know the setup. Somebody rolls in talking about better forecasts, tighter optimization, bigger benchmarks, smarter models. The room nods along because that story is easy to sell. Model first. Accuracy first. Architecture first.

Then somebody asks for the data. Not a sample. Not a tiny event feed. The real stuff. Raw operational records from multiple companies pushed into one place so the machine can get "smart." I've watched that exact request turn a cheerful meeting into a hostage situation before lunch.

Legal starts marking up terms like they're defusing a bomb. Partners get cagey about exposing customer lists, margin structure, service failures. Ops people ask the questions everybody else was trying to skip: who sees what, who gets paid if this works, and who owns the mess when the model makes a bad call at 4:40 on a Friday?

That's the answer. Data volume isn't some side issue. ScienceDirect points to it pretty clearly: modern AI in supply chain management needs huge training datasets, and that requirement becomes an implementation problem fast. I think that's the flare gun. The project wasn't blocked by math. It was designed backward.

The piece most teams miss is governance inside the workflow. Before model selection. Before vendor beauty contests. Before anyone gets hypnotized by benchmark scores and glossy system diagrams. If a vendor shows me architecture slides before anyone has mapped decision rights, I'm not impressed. I'm suspicious.

Map stakeholders by decision, not by org chart

Org charts are neat. Logistics isn't.

You need a conflict map, not an ecosystem slide. Start where work actually happens: appointment acceptance, reroute approval, inventory substitution, detention dispute, exception escalation. Then get painfully specific about who can recommend, who can approve, who can veto, and who eats the cost when it goes sideways.

A shipper can ask for priority unload. The warehouse decides labor allocation. The carrier controls arrival timing. The retailer sets delivery windows. That's the actual inter-organizational AI coordination problem under all the dashboard chatter.
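
A conflict map like the one described above can start as a plain data structure. The sketch below is illustrative only: the role assignments are hypothetical, and every network has to negotiate its own.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionRights:
    recommend: set[str] = field(default_factory=set)
    approve: set[str] = field(default_factory=set)
    veto: set[str] = field(default_factory=set)
    bears_cost: set[str] = field(default_factory=set)


# Illustrative assignments, not a recommendation for any real network.
conflict_map = {
    "priority_unload": DecisionRights(
        recommend={"shipper"},
        approve={"warehouse"},
        veto={"warehouse"},
        bears_cost={"warehouse"},
    ),
    "reroute_approval": DecisionRights(
        recommend={"broker"},
        approve={"shipper", "carrier"},
        veto={"carrier"},
        bears_cost={"shipper"},
    ),
    # Left incomplete on purpose to show what the check catches.
    "detention_dispute": DecisionRights(recommend={"carrier"}),
}


def unresolved(cmap: dict[str, DecisionRights]) -> list[str]:
    """Decisions that still lack an approver or a named cost owner."""
    return sorted(
        name
        for name, rights in cmap.items()
        if not rights.approve or not rights.bears_cost
    )


print(unresolved(conflict_map))
```

Any decision this check returns is one where the workshop is not finished. A model that recommends on it will produce advice nobody is authorized to accept.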

Align incentives before you optimize anything

A lot of "multi-party optimization" is just local optimization wearing nicer clothes.

One company cuts its cost by dumping delay or risk onto somebody else, everyone claps because one KPI moved in the right direction, and six weeks later trust is gone. I've seen this happen over something as small as unload prioritization on a Tuesday morning load bank. Tiny decision. Same political damage.

You need shared outcome logic that says the tradeoffs out loud. High-value replenishment load? Fine. Rank decisions by lost-sales risk first, detention exposure second, route cost third. Now you're building a real logistics coordination architecture. Anything less is fake agreement hiding inside a metric.
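
The ranking rule above (lost-sales risk first, detention exposure second, route cost third) is a lexicographic ordering. Below is a minimal sketch, assuming all three quantities are expressed in dollars. The option names and figures are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    lost_sales_risk: float     # expected lost sales, USD (assumed unit)
    detention_exposure: float  # expected detention fees, USD
    route_cost: float          # transport cost, USD


def rank_options(options: list[Option]) -> list[Option]:
    """Order by lost-sales risk, then detention exposure, then route cost."""
    return sorted(
        options,
        key=lambda o: (o.lost_sales_risk, o.detention_exposure, o.route_cost),
    )


options = [
    Option("cheapest_lane", lost_sales_risk=5000.0, detention_exposure=0.0, route_cost=800.0),
    Option("expedite", lost_sales_risk=0.0, detention_exposure=150.0, route_cost=1400.0),
    Option("expedite_alt_dock", lost_sales_risk=0.0, detention_exposure=0.0, route_cost=1550.0),
]

best = rank_options(options)[0]
print(best.name)
```

A strict lexicographic rule lets a one-dollar difference in the first criterion override everything after it. A real network would probably round or bucket each criterion before comparing. The point of the sketch is only that the tradeoff order is written down where every party can read it.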

Design around data-sharing limits up front

This is where teams usually get greedy.

Inter-organization data sharing should be permissioned and specific. Don't ask every partner for everything they've got. Ask for the smallest event set required to support action: milestone timestamps, capacity signals, appointment status, exception codes, confidence scores.
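
As a rough sketch of what "smallest event set" can mean in practice, the filter below strips an internal record down to an allow-list before it leaves the company. The field names are hypothetical, chosen to mirror the list above.

```python
# Fields a partner is asked to share. Everything else stays inside the company.
# These names are assumptions for illustration, not an industry schema.
SHARED_FIELDS = frozenset({
    "shipment_id",
    "milestone",
    "milestone_at",
    "capacity_signal",
    "appointment_status",
    "exception_code",
    "confidence",
})


def to_shared_event(internal_record: dict) -> dict:
    """Keep only the permissioned fields before an event crosses a boundary."""
    return {k: v for k, v in internal_record.items() if k in SHARED_FIELDS}


internal = {
    "shipment_id": "SHP-1042",
    "milestone": "ARRIVED_AT_GATE",
    "milestone_at": "2026-03-03T11:17:00+00:00",
    "appointment_status": "CONFIRMED",
    "confidence": 0.92,
    "customer_name": "Acme Retail",  # never shared
    "linehaul_rate_usd": 1840.00,    # never shared
}

shared = to_shared_event(internal)
print(sorted(shared))
```

An allow-list fails safe: a new internal field is withheld by default until someone explicitly agrees to share it.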

Federated learning for logistics and privacy-preserving machine learning make a lot more sense once you stop treating them like research toys. If carriers can help improve delay prediction without exposing customer lists or margin structure, participation gets easier fast. Less exposure usually means more trust.
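
To make the federated idea concrete, here is a toy sketch of federated averaging in plain Python. Each carrier fits a tiny delay model on its own data and shares only the model weights and a sample count. The datasets, the linear model, and the learning-rate settings are all assumptions for illustration.

```python
def local_update(weights, data, lr=0.05, epochs=10):
    """Run a few gradient steps on one partner's private data.

    Model: delay_minutes ~ w0 + w1 * distance (hundreds of miles).
    Only the resulting weights and the sample count leave the partner.
    """
    w0, w1 = weights
    n = len(data)
    for _ in range(epochs):
        # Gradients of mean squared error (with a 1/2 factor) for the linear model.
        g0 = sum((w0 + w1 * x - y) for x, y in data) / n
        g1 = sum((w0 + w1 * x - y) * x for x, y in data) / n
        w0 -= lr * g0
        w1 -= lr * g1
    return (w0, w1), n


def federated_average(updates):
    """Combine partner models, weighting each by its number of samples."""
    total = sum(n for _, n in updates)
    w0 = sum(w[0] * n for w, n in updates) / total
    w1 = sum(w[1] * n for w, n in updates) / total
    return (w0, w1)


# Hypothetical private datasets: (distance in hundreds of miles, delay in minutes).
carrier_a = [(1.0, 12.0), (2.0, 22.0), (3.0, 32.0)]
carrier_b = [(1.5, 17.0), (2.5, 27.0), (4.0, 42.0), (5.0, 52.0)]

global_weights = (0.0, 0.0)
for _ in range(200):  # communication rounds
    updates = [local_update(global_weights, d) for d in (carrier_a, carrier_b)]
    global_weights = federated_average(updates)

print(global_weights)
```

Both toy datasets follow the same underlying pattern (about 2 minutes of base delay plus 10 per hundred miles), so the shared model settles close to those values without either carrier revealing a single shipment record. Sharing weights alone is not a complete privacy guarantee. Production systems typically add protections such as secure aggregation or differential privacy on top.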

Set decision rights for normal operations and failure modes

Your AI needs authority boundaries as much as it needs predictions.

Who can act automatically on low-risk exceptions? Who only gets alerted? Who has to approve schedule changes that touch contractual SLAs? Those aren't cleanup questions for later.

The best B2B logistics AI integration work I've seen separates recommendation rights from execution rights with almost annoying clarity. It matters even more in multi-party supply chain AI, where shared visibility doesn't mean shared control. Real-time supply chain visibility helps, sure. Visibility without authority design just gives everyone a sharper view of the same argument.

If you want a smart template for this thinking, read our coordination-first multi-agent system development piece.

What should you do next? Run four workshops before you choose models or vendors: stakeholder decisions, incentive conflicts, minimum viable data sharing, and approval rules by exception type. Sounds boring. I don't care. I've watched teams burn six figures skipping exactly that work. Boring architecture beats expensive confusion every time — so why do people still insist on starting with the demo?

Coordination Architecture: Building AI Across Boundaries

Everybody says the hard part of logistics AI is the model. Better predictions. Smarter agents. More automation. Sounds good on a conference stage. Then 6:17 a.m. hits on a Tuesday, the carrier app says the truck arrived, Manhattan WMS is still waiting for the receipt task, SAP shows the ASN as pending, MercuryGate relaxes because detention risk supposedly dropped, and the ops lead standing at the dock knows the whole picture is wrong.

That's the part people skip. I'd argue the prediction usually isn't what fails first. The real mess starts before that, in the layer nobody wants to talk about: who decides what actually happened, who owns the next move, and when a human has to step in before two companies make conflicting decisions off the same bad assumption.

That's logistics coordination architecture. Not theory. Survival. Especially for multi-party logistics AI development and messy inter-organizational AI coordination where one bad status can ripple across carriers, warehouses, brokers, and consignees in under ten minutes.

A single event can blow up an otherwise smart workflow. Arrival confirmed. Dock assigned. Customs hold issued. Temperature breach at 38°F on a reefer lane that was supposed to stay below 34°F. Missed appointment. Those aren't just updates for someone's dashboard. Each one should trigger rules, recommendations, or tasks across partners, not stay trapped inside one company's system.

You need an event-driven setup with shared state, explicit approvals, and hard connections into ERP, TMS, and WMS platforms. Twelve minutes of lag doesn't sound dramatic until an AI starts making confident decisions off stale data and now you've got labor scheduled for freight that isn't ready, or detention exposure hiding behind a status nobody trusts.
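
A staleness guard is one small, concrete defense against the lag problem described above. This sketch assumes events carry ISO 8601 timestamps with an explicit UTC offset. The 12-minute tolerance simply echoes the figure in the text and is not a recommendation.

```python
from datetime import datetime, timedelta, timezone

MAX_EVENT_AGE = timedelta(minutes=12)  # assumed tolerance, tune per workflow


def parse_event_time(raw: str) -> datetime:
    """Parse an ISO 8601 timestamp and normalize it to UTC.

    Timestamps without an offset are rejected rather than guessed, because
    a silently wrong time zone is worse than a missing event.
    """
    ts = datetime.fromisoformat(raw)
    if ts.tzinfo is None:
        raise ValueError(f"timestamp has no UTC offset: {raw!r}")
    return ts.astimezone(timezone.utc)


def is_fresh(event_time: datetime, now: datetime,
             max_age: timedelta = MAX_EVENT_AGE) -> bool:
    """True if the event is recent enough to drive an automated decision."""
    return now - event_time <= max_age


now = datetime(2026, 3, 3, 11, 30, tzinfo=timezone.utc)
arrival = parse_event_time("2026-03-03T06:17:00-05:00")  # 11:17 UTC
print(is_fresh(arrival, now))
```

In this example the arrival event is 13 minutes old when it is evaluated, so it fails the check. The system should then ask for confirmation instead of scheduling labor against it.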

Events matter. Shared state matters more than most teams want to admit.

Not a giant pooled database, either. I've watched projects die right there, usually sometime after legal gets pulled in and everyone realizes nobody agrees on what can be exposed. What works is narrower: a permissioned record of operational facts multiple parties can trust — current milestone, confidence level, owner of next action, exception severity, pending approvals. That's how you get real-time supply chain visibility without reckless inter-organization data sharing.

AWS for Industries has argued that agentic AI can support a strong data strategy and make logistics run smarter. Fair enough. The missing piece is restraint. In multi-party supply chain AI, an agent can recommend rerouting freight or changing inventory priority. It shouldn't quietly rewrite another company's labor plan or trigger contract penalties because it acted like authority and advice were the same thing.

Humans belong where risk gets uneven. Not everywhere. Just where a decision can shift cost or liability across company lines.

- Auto-execute: reschedule an internal pick wave after a late inbound signal.

- Require approval: move delivery from same-day to next-day if the consignee contract is affected.

- Escalate jointly: reroute freight across carriers when margin, service level, and capacity all change at once.
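
The three tiers above can be expressed as a small routing function. The rules below are one hypothetical reading of those tiers, based on who is affected and whether a contract is touched. A real policy would be negotiated between the partners.

```python
from enum import Enum


class Route(Enum):
    AUTO_EXECUTE = "auto_execute"
    REQUIRE_APPROVAL = "require_approval"
    ESCALATE_JOINTLY = "escalate_jointly"


def route_decision(parties_affected: set[str], acting_party: str,
                   touches_contract: bool) -> Route:
    """Pick a handling tier from who is affected and whether a contract is in play."""
    external = parties_affected - {acting_party}
    if not external and not touches_contract:
        return Route.AUTO_EXECUTE      # the change stays inside one company
    if len(external) <= 1:
        return Route.REQUIRE_APPROVAL  # a single counterparty or contract owner signs off
    return Route.ESCALATE_JOINTLY      # cost or service shifts across several parties


# Reschedule an internal pick wave after a late inbound signal.
pick_wave = route_decision({"warehouse"}, "warehouse", touches_contract=False)

# Move delivery from same-day to next-day under a consignee contract.
delivery_move = route_decision({"shipper", "consignee"}, "shipper", touches_contract=True)

# Reroute freight across carriers.
reroute = route_decision(
    {"shipper", "carrier_a", "carrier_b", "consignee"}, "shipper", touches_contract=True
)

print(pick_wave, delivery_move, reroute)
```

Keeping this logic in one explicit function means the authority boundary can be audited and changed without retraining anything.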

This is where B2B logistics AI integration stops being slide-deck fluff and starts touching real systems: SAP or Oracle ERP, Blue Yonder or Manhattan WMS, MercuryGate or Oracle Transportation Management on the TMS side. Pull in APIs and event streams from those systems. Normalize them into one operational decision fabric built for multi-party optimization.
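
Normalizing feeds usually means one small adapter per source system, all emitting the same canonical event. The sketch below uses invented payload shapes. They are not the actual schemas of SAP, Oracle, Blue Yonder, Manhattan, or MercuryGate, which will differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CanonicalEvent:
    shipment_id: str
    milestone: str
    source: str


# Hypothetical payload shapes for illustration only.
def from_tms(payload: dict) -> CanonicalEvent:
    return CanonicalEvent(payload["loadId"], payload["status"].upper(), "tms")


def from_wms(payload: dict) -> CanonicalEvent:
    milestone = "RECEIVED" if payload["receipt_complete"] else "RECEIPT_PENDING"
    return CanonicalEvent(payload["shipment_ref"], milestone, "wms")


ADAPTERS = {"tms": from_tms, "wms": from_wms}


def normalize(source: str, payload: dict) -> CanonicalEvent:
    """Translate a source-specific payload into the shared event shape."""
    try:
        adapter = ADAPTERS[source]
    except KeyError:
        raise ValueError(f"no adapter registered for source {source!r}") from None
    return adapter(payload)


tms_event = normalize("tms", {"loadId": "SHP-1042", "status": "arrived"})
wms_event = normalize("wms", {"shipment_ref": "SHP-1042", "receipt_complete": False})
print(tms_event)
print(wms_event)
```

Once both systems speak the same shape, the 6:17 a.m. disagreement becomes visible as data: the TMS adapter reports an arrival while the WMS adapter still reports a pending receipt for the same shipment.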

If partners don't want broad raw-data sharing, that's normal. Most do not. Use scoped exchange patterns now. Save federated learning for logistics and privacy-preserving machine learning for later, when prediction quality actually earns the added complexity instead of just sounding sophisticated in procurement meetings.

If you want dependable execution from logistics AI development services, start with events, state, approvals, and system connectors. Everything else is demo material. If you want to go deeper, read our coordination-first multi-agent system development guide — then ask the uncomfortable question: if one partner changes reality right now, who actually knows?

How Buzzi.ai Delivers Multi-Party Logistics AI Services

Everybody says the same thing about logistics AI: get better predictions, add more visibility, automate the alerts. Sounds nice. Sounds modern. And half the time it's dead wrong.

I watched that play fail last winter on a delayed shipment. The carrier saw the delay first. The warehouse knew dock space was gone. The broker had an angry customer demanding answers. The system fired off alerts like it was doing something useful, and for 47 minutes the problem just ricocheted around inboxes because nobody wanted to make the first move and own the cost.

That's why I don't buy the obsession with squeezing out one more point of model accuracy. A cleaner prediction doesn't fix a shared operation where carriers, warehouses, brokers, suppliers, and customers all hold different pieces of the truth and none of them want to expose more data than they have to.

The demand for a better approach isn't hypothetical anymore. BCG reported in 2026 that 37% of freight forwarders' apparel and fashion customers expect AI-powered solutions. Not pilot theater. Not another dashboard with green-yellow-red flags. They expect service that still works once decisions cross company boundaries.

Buzzi.ai is built around that exact mess. Its multi-party logistics AI development work isn't based on wiring every partner into one giant system and hoping trust magically appears. That's outdated thinking.

The missing piece is coordination design before automation. Who can approve a reroute? Who benefits if inventory gets reallocated? Who gets blamed if margin disappears? Where does data sharing stop because legal shut the door, or because two partners have been working together for six years and still don't trust each other with fill-rate numbers?

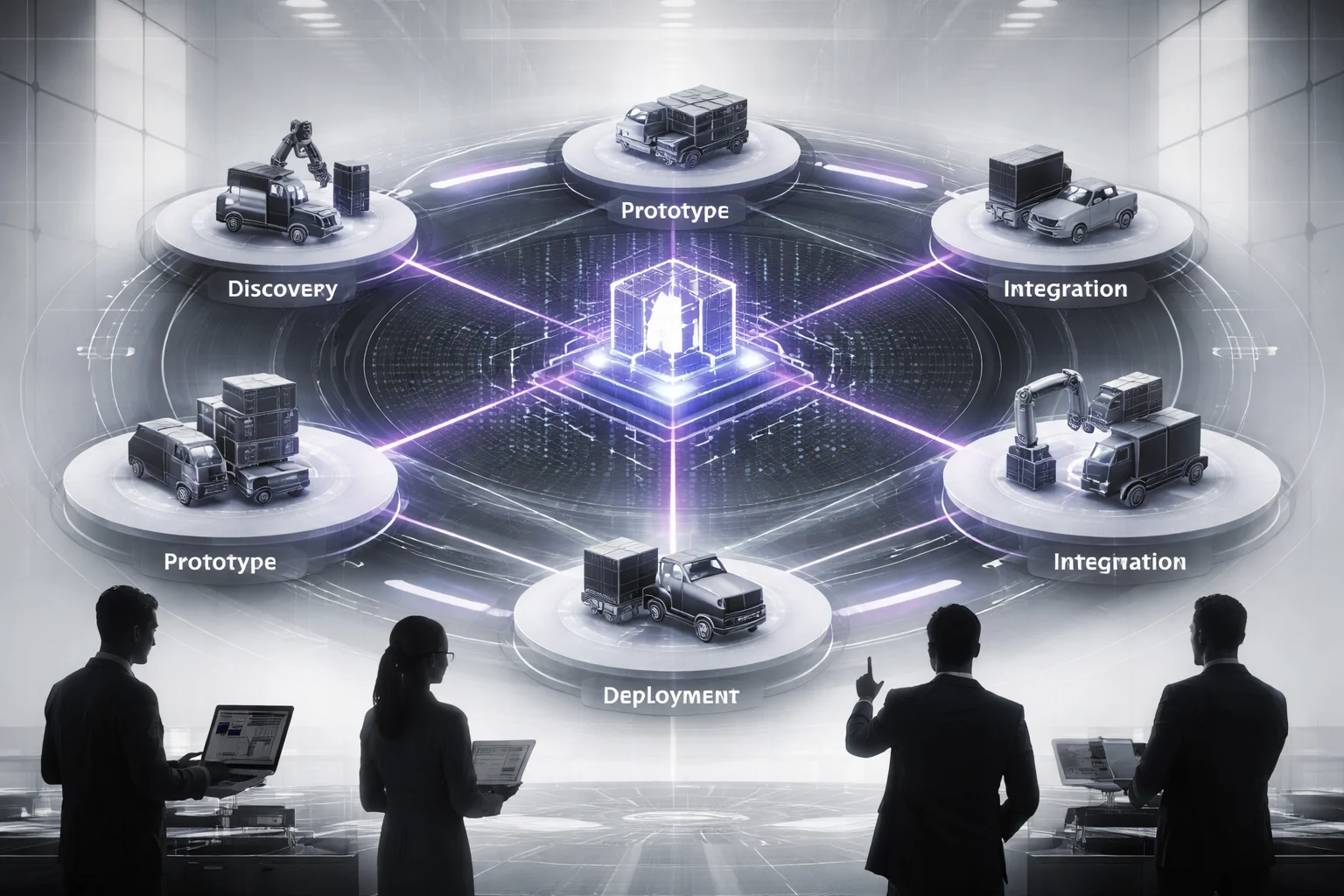

So the work starts with discovery. Not glamorous, but necessary. Decisions, incentives, data boundaries. That's the map.

Then Buzzi.ai narrows the scope hard and prototypes one workflow that actually hurts enough to matter. Exception triage is a strong candidate. Appointment coordination too. Ugly workflows are usually the right ones — high-value, easy to test, impossible to ignore once they improve.

Only after that do the systems get connected: ERP, TMS, WMS, partner APIs, event streams. Real integration work. The kind that makes real-time supply chain visibility mean something beyond a sales demo at Manifest or Gartner Supply Chain Symposium.

Most teams trip during deployment because they pretend risk is shared evenly across the network. It never is. A 3PL handling penalties doesn't carry the same downside as a brand eating a missed retail delivery window at Target or Zara. Buzzi.ai keeps human approvals where they belong, then layers optimization on top with multi-party optimization rules, inter-organization data sharing controls, and privacy-preserving options like federated learning for logistics when partners need tighter boundaries.

That's really the center of it: no single company gets to dictate reality in a shared network. Buzzi.ai's logistics AI development services are built for inter-organizational AI coordination, practical B2B logistics AI integration, and a working logistics coordination architecture for multi-party supply chain AI.

If you're trying to make this real, I'd argue you should ignore any AI pitch that only works inside your own four walls. Pick one shared workflow first. Decide who can see what. Keep approvals in place where the downside is real. Connect systems in stages instead of all at once. Prove that people across separate companies can act on the same signal without turning every exception into a five-person committee call.

If that's your actual problem, read this piece on coordination-first multi-agent system development. Because if your partners still can't coordinate, what exactly did you automate?

The bottom line

Multi-party logistics AI development works only when you treat AI as a coordination system across carriers, warehouses, brokers, suppliers, and customers, not as a pile of isolated models.

So audit your handoffs before you buy another prediction engine. Map the events, data rights, exception paths, and human decision points that cross company boundaries, because that's where delays, disputes, and margin leaks usually start. And watch your data quality like a hawk: according to a 2026 Trax Technologies report, 70% of AI projects fail because of data quality issues. The data is usually bad long before the model is.

Build the coordination layer first, or your logistics AI will fail in production.

FAQ: Logistics AI Development Services for Multi-Party Reality

What are logistics AI development services?

They’re the services used to design, build, integrate, and run AI systems for freight, warehousing, transportation, and supply chain operations. In practice, that means demand forecasting, shipment planning, exception management automation, warehouse and yard scheduling, and transportation management optimization. Good providers don’t just ship models. They connect AI to your TMS, WMS, ERP, carrier feeds, and partner workflows.

How does multi-party logistics AI development work across organizations?

It works by coordinating decisions across shippers, carriers, 3PLs, warehouses, suppliers, and sometimes customers, instead of optimizing each company in isolation. The core idea is shared signals, local decision rights, and a common coordination layer for event-driven logistics. That’s what makes inter-organizational AI coordination useful in the real world, where no single company controls the whole network.

Why do logistics AI projects fail when they’re built for only one organization?

Because logistics breaks at the handoff points, not just inside your four walls. A model can look smart inside one ERP and still fail once carrier delays, supplier changes, dock congestion, or partner data gaps hit the process. According to a 2026 Trax Technologies report, 70% of AI projects fail due to data quality issues, and poor data quality costs organizations $12.9 million annually.

What coordination architecture is needed for AI across supply chain boundaries?

You need a logistics coordination architecture built around APIs, EDI, event streams, shared business events, and clear decision ownership. Most teams also need cross-enterprise workflow orchestration, a canonical event model, and rules for exception routing between partners. If you skip that layer and jump straight to models, your AI ends up making recommendations nobody can act on.

What data integration approach works best for B2B logistics AI integration?

The boring answer is the right one: use a mix of API-based supply chain integration, EDI for legacy partner flows, and event streaming for real-time supply chain visibility. Different partners run on different clocks and different systems, so one integration method usually won’t cover the network. The best setups normalize shipment, inventory, order, and milestone data into a shared operational view without forcing every partner onto the same stack.

How do you handle privacy and security in inter-organizational logistics AI?

You don’t start by sharing raw data everywhere. Strong multi-party supply chain AI programs use role-based access, data minimization, contract-based AI governance, audit trails, and privacy-preserving machine learning where needed. In some cases, partners share features, scores, or alerts instead of full records, which cuts risk without killing coordination.

Does federated learning work for logistics optimization use cases?

Sometimes, yes, but it’s not magic. Federated learning for logistics makes sense when partners want to improve forecasting, ETA prediction, or anomaly detection without pooling all underlying data in one place. It’s less useful when the real problem is bad process design, inconsistent master data, or missing event coverage, which is still common according to ScienceDirect’s findings on training data hurdles.

How should you measure ROI for multi-party logistics AI development?

Measure network outcomes, not just model accuracy. Track on-time delivery, dwell time, tender acceptance, inventory turns, expedite spend, planner workload, exception resolution speed, and service-level compliance across partners. If your AI improves one company’s dashboard but makes the rest of the chain slower or more expensive, that’s not ROI. That’s cost shifting.