Lead Qualification Chatbot: Conversational Wins

Most lead forms are dead weight. They don't qualify intent, they don't catch urgency, and they sure don't tell your sales team who deserves a callback first.

That's why a lead qualification chatbot is beating the old form playbook in more pipelines than most teams want to admit. According to a 2026 Jotform Blog report, 74% of customers prefer chatbots for simple, quick questions, and Codertrove reports that over 60% of companies using chatbots now use them to qualify leads. In this article, I'll show you where conversational lead qualification actually wins, where it falls flat, and what your team should do before you let a bot near your pipeline.

What Is a Lead Qualification Chatbot?

Hottest take? A lead qualification chatbot is not a prettier contact form. I think calling it that is exactly why so many companies build bad ones.

The whole point is speed at the moment somebody actually wants something. Jotform Blog reported in 2026 that 51% of customers use chatbots for immediate answers. That word matters. Immediate means right now, while the buyer is still on the page, still comparing options, still deciding whether your tool fits their 40-person team or whether they should click back and try a competitor.

I watched this happen on a SaaS site with a totally respectable “contact sales” setup. Five fields. Clean layout. Polished copy. The kind of page nobody objects to in a review meeting. People still skipped the form, opened chat, and asked a blunt question: “Can this work for a 40-person team?” I've seen that exact behavior show up in session recordings in under 20 seconds. That's not random curiosity. That's buying intent.

That's what most people miss.

A lead qualification chatbot isn't there to hoard names and email addresses like it's 2018. It asks useful questions, figures out what the visitor is trying to do, and decides what happens next. Who's seriously evaluating? Who's just poking around? Who needs a human right now because waiting even an hour could cool the deal off?

A support bot answers FAQs. A rigid form marches through fixed fields in fixed order whether they make sense or not. A good AI sales chatbot behaves more like a sharp rep on a good day. It uses conversational AI, natural language processing (NLP), and intent detection to handle conversational lead qualification like an actual exchange instead of paperwork with chat bubbles.

The difference gets obvious fast. One page throws eight blanks at the visitor before answering anything. The bot asks one thing at a time: team size, budget range, timeline, use case, current tools. Wonderchat describes strong systems in pretty plain terms: collect qualification criteria, score the lead, trigger lead scoring automation, route qualified prospects to sales or straight into booking.
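To make that collect, score, route sequence concrete, here's a minimal sketch. Everything in it is an assumption for illustration: the `LeadAnswers` fields, the point weights, and the thresholds are made up, not taken from Wonderchat or any other vendor.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LeadAnswers:
    """Answers collected one question at a time; None means not asked yet."""
    use_case: Optional[str] = None
    team_size: Optional[int] = None
    timeline_days: Optional[int] = None
    budget_range_known: bool = False


def score_lead(answers: LeadAnswers) -> int:
    """Add points for each criterion that is present. Weights are hypothetical."""
    score = 0
    if answers.use_case:
        score += 20
    if answers.team_size is not None and answers.team_size >= 10:
        score += 30
    if answers.timeline_days is not None and answers.timeline_days <= 90:
        score += 30
    if answers.budget_range_known:
        score += 20
    return score


def route(score: int) -> str:
    """Map a score to a next step. Thresholds are illustrative only."""
    if score >= 70:
        return "book_meeting"
    if score >= 40:
        return "sales_queue"
    return "nurture"


lead = LeadAnswers(use_case="lead routing", team_size=40, timeline_days=30)
print(score_lead(lead), route(score_lead(lead)))
```

The point of keeping every field optional is that the bot can score with partial answers instead of forcing all the questions before it does anything useful.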

Tone makes or breaks it. People can smell an interrogation instantly. Ask one question at a time. Tell them why you're asking if it isn't obvious. Change direction based on what they say. I'd argue this matters more than clever wording. I've seen bots ask for company revenue in message two and wreck the conversation almost immediately — about 12 seconds from interest to irritation.

If you're building one, don't start with the script. Start with your qualification criteria. Build the dialogue around that. If you want to see how that looks in practice, read Real Estate Ai Chatbot That Qualifies Leads.

Funny part? The best lead qualification chatbot often feels less like automation and more like relief. Somebody asked a real buying question, and for once your website didn't make them fill out five boxes before getting an answer.

Why Interrogatory Qualification Loses Leads

Last month I watched a SaaS chatbot blow a perfectly good lead in under 20 seconds. The visitor asked a plain question — “Does this integrate with HubSpot?” — and got hit with the usual bureaucratic nonsense: budget, company size, purchase timeline. Not an answer. Not even a useful hint. Just intake.

People notice that stuff fast. Five seconds, maybe less. A bot with typing dots and friendly phrasing doesn't magically become a conversation if it's still doing the job of a bad form.

Salesforce has pushed the operational logic for years: collect needs, budget, and timing, pass it into CRM and marketing systems, keep lead management clean. Fine. I don't have a problem with the plumbing. The problem starts after teams hear that advice and build a lead qualification chatbot that extracts before it helps.

That's where conversational lead qualification breaks. The buyer does all the work up front. Your side gives almost nothing back. I've seen bots on SaaS sites ask four gating questions before offering a one-sentence integration answer. Four questions for something a help doc could've handled in eight words. People leave. I'd leave too.

The real miss is intent.

Too many teams treat conversational AI, natural language processing (NLP), and intent detection like field-harvesting tools. Faster extraction. More records. Cleaner CRM entries. I think that's backwards. Those systems should figure out what the person is trying to do, then decide what comes next: answer, clarify, qualify, or route.

If someone's showing buying intent, great. Start gathering relevant qualification criteria. If they're still asking whether your product even fits their stack, interrogation kills momentum before it has a chance to build.
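Here's one way that answer, clarify, qualify, or route decision could look in code. It's a sketch under my own assumptions: the inputs (`intent_confidence`, `has_open_question`, and so on) and the 0.5 cutoff are hypothetical, and a real system would get them from whatever intent model you run.

```python
from enum import Enum


class NextStep(Enum):
    ANSWER = "answer"
    CLARIFY = "clarify"
    QUALIFY = "qualify"
    ROUTE = "route"


def next_step(has_open_question: bool, intent_confidence: float,
              buying_intent: bool, enough_signal: bool) -> NextStep:
    """Pick the next move. Order matters: helping comes before extracting."""
    if intent_confidence < 0.5:   # hypothetical cutoff: we do not understand yet
        return NextStep.CLARIFY
    if has_open_question:         # the visitor asked something: answer it first
        return NextStep.ANSWER
    if buying_intent and enough_signal:
        return NextStep.ROUTE     # enough criteria collected, hand it on
    if buying_intent:
        return NextStep.QUALIFY   # one relevant question, not an intake form
    return NextStep.ANSWER        # no buying intent: keep helping, do not gate


# A HubSpot question with clear buying intent still gets an answer first.
print(next_step(True, 0.9, True, False))
```

Notice that an open question outranks buying intent. That ordering is the whole argument of this section expressed as an `if` chain.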

This isn't some edge-case mess either. Codertrove reported that more than 60% of companies using chatbots now use them specifically for lead qualification. That's a lot of businesses automating the wrong part first.

- Drop-off rises. Ask too much too early and people bounce before anything useful happens.

- Trust drops. The exchange feels one-sided, like your system cares more about data capture than helping.

- Meeting-booking rates slip. Prospects who might've booked after one decent answer never get there.

A better AI sales chatbot earns the right to ask. Give one useful answer first. Ask one relevant question next. That's conversational discovery. Value traded for information. Not information demanded on credit.

Here's the practical version.

Someone asks about HubSpot? Answer the HubSpot question first. Then ask what they're trying to sync — contacts, deal stages, attribution, whatever matters in their setup. If budget is actually the blocker, ask about budget. If it isn't, don't drag it in just because some workflow template says you should.

Lead scoring automation can do its job quietly in the background once you've got enough signal to work with. The user doesn't need to feel every internal process your RevOps team built.

If you're comparing rigid bot setups with custom flows, this is where the difference gets painfully obvious: chatbot development services vs platforms.

The part people miss? Asking fewer chatbot qualification questions often gets better answers. Around question two, people still feel like they're talking. Around question five, they feel processed.

Natural Lead Qualification Methodology

Everybody says the same thing: make the bot fast, keep the chat short, qualify the lead in two or three questions, hand it to sales, done. That story sounds clean. It also leaves out the part where real buyers act like real people and bail the second a bot feels pushy.

55%. That’s what Codertrove reported in 2026: more than half of companies said chatbots were bringing in better leads. Sure. I believe it. I’ve also seen those same companies wreck the handoff because the bot treated qualification like airport security. Too early. Too stiff. Too many “What’s your budget?” questions before anyone had even explained what they needed.

Jotform Blog has been pointing at the same trend for a while now: more teams are using bots to qualify leads so sales can spend time on actual opportunities instead of dead ends. Fine. The old thinking is that speed is the whole win. I’d argue that’s outdated. People will answer six or seven prompts without much friction if each one makes sense. I watched a SaaS buyer do exactly that last fall during a test flow tied to HubSpot. Same week, another prospect disappeared after the second message because the bot jumped straight into budget and implementation timing like it was trying to hit quota before lunch.

The missing piece is context. Not slick copy. Not fewer questions just for bragging rights. Context first, then qualification.

1. Open easy

A good first question doesn’t corner anybody. It gives you intent detection data without making the user feel screened by a machine with trust issues.

“What are you trying to solve?” works. “Are you comparing options or planning implementation?” works too. Those are light touches, but they tell you a lot.

A decent AI sales chatbot using natural language processing (NLP) can sort replies into useful groups like exploration, evaluation, or purchase intent. That matters more than people admit, because hard qualification too early is how a bot turns cold and weird fast.
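As a rough illustration of that sorting step, here's a keyword-based stand-in. To be clear, this is not real NLP: a production bot would use a trained intent model, and the keyword sets below are my own guesses, not a vetted taxonomy.

```python
import re

# Hypothetical keyword sets; checked in this order, so purchase wins ties.
STAGE_KEYWORDS = {
    "purchase": {"pricing", "price", "quote", "demo", "buy", "contract"},
    "evaluation": {"compare", "comparing", "integrate", "integration", "alternative"},
    "exploration": {"learn", "curious", "researching", "exploring"},
}


def classify_stage(message: str) -> str:
    """Return the first stage whose keywords appear as whole words in the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    for stage, keywords in STAGE_KEYWORDS.items():
        if words & keywords:
            return stage
    return "unknown"


print(classify_stage("We are comparing a few options right now"))
```

An `"unknown"` result is useful too. It tells the bot to ask a light clarifying question instead of guessing and charging into budget.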

2. Don’t interrogate yet

This is where most chatbot qualification questions go bad. Someone answers one thing and the bot immediately starts grabbing fields: timeline, budget, team size, rollout date. Basically a form pretending to be a conversation.

A better move is almost boring, which is why it works. Reflect first.

“Got it, you need something that works with HubSpot and fits a small sales team.” That kind of line does two jobs at once: it proves the bot understood them, and it buys permission for the next question.

Then ask one follow-up that actually belongs there. “Is this for one team or across the company?” feels normal. Randomly lunging at headcount doesn’t.

3. Help while you qualify

This part gets missed all the time. If intent is clear, go deeper into conversational discovery. If they’re still figuring things out, give them something useful before asking for more information. Salesforce has been saying this for years, and they’re right: conversational exchanges beat static forms when people get value during the chat instead of after it.

- If someone asks about integrations: answer briefly, then ask what tools they already use.

- If someone asks about pricing: give range logic or pricing context, then ask about team size or use case.

- If someone asks about implementation timing: share the usual rollout window, then ask about their deadline.
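Those three pairings can be written down as a tiny answer-first playbook. This is a sketch, not a framework: the topic keys and the placeholder answer text are hypothetical, and the only rule it enforces is that the answer always comes before the follow-up.

```python
from typing import List

# topic -> (what the bot says first, the one follow-up it has earned)
PLAYBOOK = {
    "integrations": ("Brief answer about the integration they asked about.",
                     "What tools do you already use?"),
    "pricing": ("Pricing context or range logic.",
                "What team size or use case is this for?"),
    "implementation": ("The usual rollout window.",
                       "What deadline are you working toward?"),
}


def respond(topic: str) -> List[str]:
    """Return the messages to send, answer first. Unknown topics get an open question."""
    if topic not in PLAYBOOK:
        return ["What are you trying to solve?"]
    answer, follow_up = PLAYBOOK[topic]
    return [answer, follow_up]


for line in respond("pricing"):
    print(line)
```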

The scoring itself should stay in the background. Your conversational AI can handle lead scoring automation quietly as signals appear. Nobody wants to feel like they’re being graded in real time by a chatbot with a clipboard.

If you’re building this yourself, this is usually where generic flows fall apart. Stock templates look fine until buyers stop following the script. Custom logic usually holds up better: AI chatbot virtual assistant development.

So no, shorter chats aren’t automatically better chats. Read intent first. Say back what you heard. Give value before asking for more data. If your bot hasn’t earned the next question, why would anybody answer it?

Conversation Design Patterns That Feel Helpful

Everybody says the same thing about chatbots: qualify faster, capture more leads, keep the pipeline moving. Sure. That's the sales-deck version.

The problem is that advice is incomplete, and sometimes just flat-out wrong in practice. I watched a B2B bot last month turn a simple question — “Do you integrate with Salesforce?” — into a mini interrogation about budget, company size, timeline, and email, complete with that fake-friendly typing indicator pulsing away like it deserved gratitude. It wasn't helping. It was blocking the door.

That's still where teams mess this up. They build a lead qualification chatbot like its job is to control the conversation and pull data out of people as fast as possible. I think the better approach is almost backwards: let the visitor lead, answer what they came for, and quietly gather enough signal for lead scoring automation without making the whole exchange feel like intake paperwork.

Small shift. Huge difference.

A 2026 Codertrove report found that 67% of business leaders say chatbots have increased their sales. I don't read that as proof that every bot is good. Not even close. I read it as proof that the upside is real, and bad conversation design still leaves money on the table every single day.

Codertrove also says chatbots help with lead qualification by engaging people in real time, asking the right questions, and automating the process so sales teams can focus on sales-ready prospects. The missing piece is buried inside that phrase: the right questions. Not every question. Not six in a row. Not before you've answered the thing they actually asked.

Start by helping

If someone wants to know whether your product connects to Salesforce, your AI sales chatbot should just answer it. Directly. Then ask one follow-up that's actually useful, something like, “Are you replacing an existing workflow or setting this up fresh?” That's conversational discovery. You're honoring intent while still collecting meaningful qualification criteria.

The weaker version flips that order and jumps straight into chatbot qualification questions about budget or timeline before trust exists. I've seen sessions die in under 20 seconds that way.

Give people something to click

Open text boxes look great in demos. Real users freeze.

People don't want homework from a bot. A stronger conversational lead qualification flow mixes natural language with clear choices like “Just researching,” “Need pricing,” or “Ready to talk.” With solid intent detection and natural language processing (NLP), free-text replies still work fine. The options just cut friction fast because they reduce decision effort without making anyone feel cornered.
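A minimal sketch of mixing buttons with free text might look like this. The mapping from each button label to a stage is my assumption, and returning `None` is just a stand-in for "hand this reply to your intent detection".

```python
from typing import Optional

# Button labels from the example above; the stage each maps to is hypothetical.
QUICK_REPLIES = {
    "just researching": "exploration",
    "need pricing": "evaluation",
    "ready to talk": "purchase",
}


def resolve_reply(raw: str) -> Optional[str]:
    """Return a stage if the reply matches a button, else None for free text."""
    return QUICK_REPLIES.get(raw.strip().lower())


print(resolve_reply("Ready to talk"))
print(resolve_reply("we mostly live in HubSpot"))
```

Button clicks resolve instantly and unambiguously. Anything typed still gets through, it just takes the slower NLP path.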

That's why custom dialogue work usually beats flashy prototype theater. The lift comes from how each turn is structured, not how polished the demo looks: AI chatbot virtual assistant development.

Repeat back what you think you heard

Bots have this bad habit of acting confident when they shouldn't.

If your system doesn't confirm meaning, your data gets messy fast. A line like, “Sounds like you're evaluating for a 20-person sales team with a Q3 deadline, right?” pulls off three jobs at once: it improves accuracy, sharpens lead scoring, and makes the interaction feel cooperative instead of extractive.
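Here's a small sketch of building that read-back line from whatever the bot has captured so far. The function name and parameters are hypothetical. The one design choice worth copying is that it returns nothing when there is nothing to confirm, so the bot never reads back an empty guess.

```python
from typing import Optional


def confirmation_line(team_size: Optional[int] = None, team: str = "",
                      deadline: Optional[str] = None) -> Optional[str]:
    """Build a confirmation question from captured facts, or None if there are none."""
    details = []
    if team_size is not None:
        label = f"{team} team" if team else "team"
        details.append(f"for a {team_size}-person {label}")
    if deadline:
        details.append(f"with a {deadline} deadline")
    if not details:
        return None  # nothing captured yet, so do not fake a summary
    return "Sounds like you're evaluating " + " ".join(details) + ", right?"


print(confirmation_line(team_size=20, team="sales", deadline="Q3"))
```

If the visitor says "no, it's 200 people," you overwrite the stored value before it ever reaches lead scoring.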

Good conversational AI doesn't just collect responses. It shows it understood them.

Know when to stop

The smartest thing a bot can do sometimes is step aside.

If buying intent is high, confusion is rising, or somebody starts asking edge-case questions, offer a clean handoff to a human: “I can bring in sales now if you'd rather talk specifics.” That's not failure. That's good UX. Honestly, one of the most human things a bot can do is get out of the way at exactly the right moment.
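The handoff rule can be as plain as this sketch. The thresholds are invented, and I'm using a count of fallback replies ("sorry, I didn't get that") as a rough proxy for rising confusion, which is an assumption rather than a standard.

```python
HIGH_INTENT_SCORE = 70   # hypothetical lead score that counts as "high intent"
MAX_FALLBACKS = 2        # hypothetical limit on "I didn't understand" replies


def should_hand_off(lead_score: int, fallback_count: int, asked_for_human: bool) -> bool:
    """Offer a human when intent is high, confusion is rising, or they asked for one."""
    return (
        asked_for_human
        or lead_score >= HIGH_INTENT_SCORE
        or fallback_count >= MAX_FALLBACKS
    )


print(should_hand_off(lead_score=35, fallback_count=2, asked_for_human=False))
```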

If your flow keeps pushing instead of helping, asking instead of answering, and persisting instead of handing off, what do you think users are feeling?

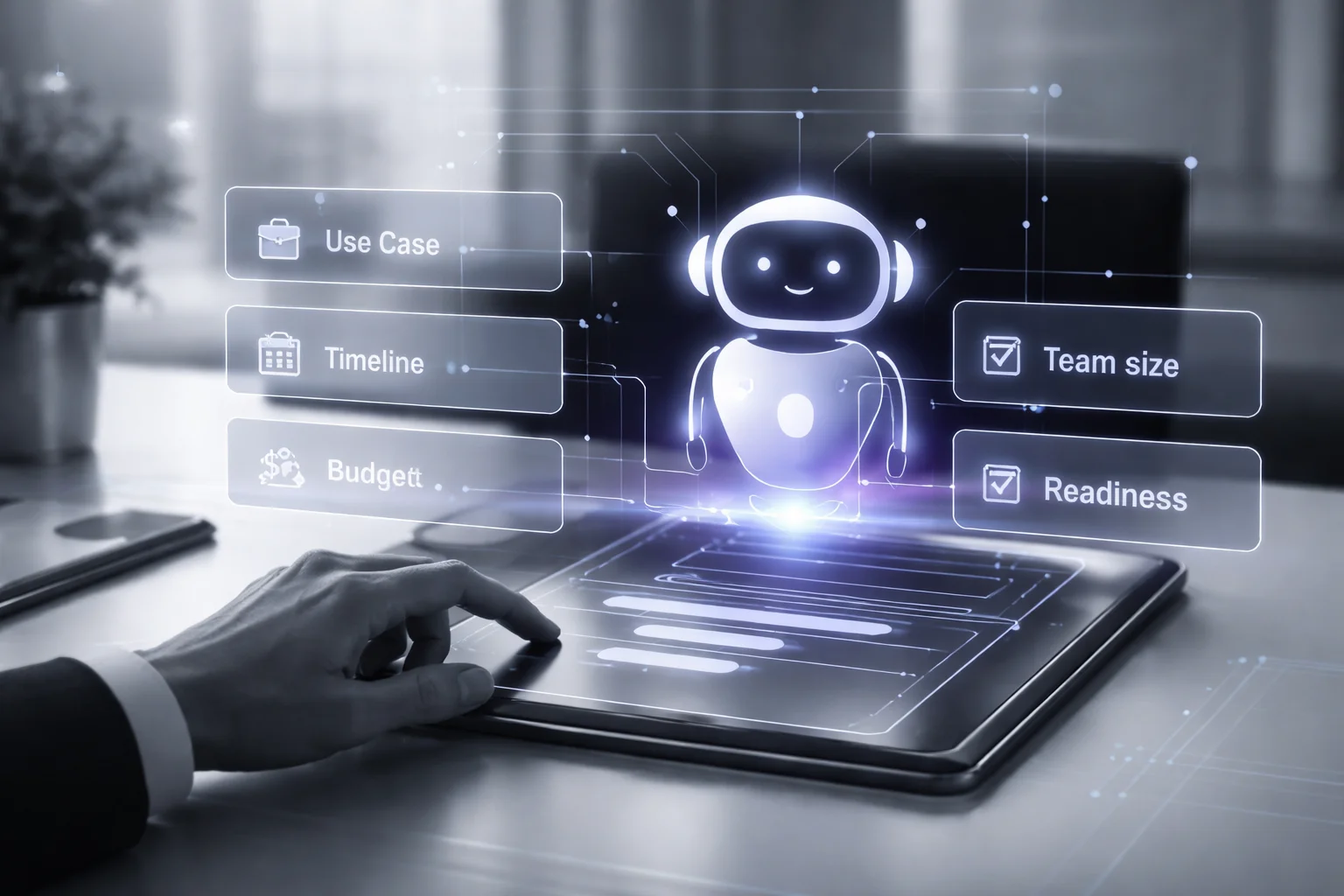

What High-Converting Lead Qualification Chatbots Ask

Why do so many chatbots still open like a bored front-desk temp with a clipboard?

I saw it again last summer on a SaaS site. A buyer landed there trying to fix lead routing, clicked into chat, and got hit with the usual lineup: name, work email, company, budget, timeline. Five questions. Gone. Session over in maybe 40 seconds. I've watched enough of these to know the pattern by heart.

People don't show up wanting to complete your CRM hygiene project. They show up because something's broken, expensive, slow, embarrassing, or all four at once. Yet a lot of teams still treat the first interaction like intake paperwork.

Salesforce has been making the case for years that conversational flows beat static forms because they hold attention while collecting contact details and preferences. Sure. That's true. But plenty of companies took that advice and did the laziest possible thing with it: they shoved the same old form into an AI sales chatbot and added typing dots, as if animation alone makes friction feel friendly.

The answer is simple, but it's not comfortable: a good lead qualification chatbot asks in buying order, not CRM order. I think that's where most teams get this wrong. The bot should start with conversational discovery, because identity isn't the most useful signal at the beginning. Intent is.

Start with the problem, not the person

If someone says, “We need help routing inbound demos,” you've already learned something you can act on. Same with support deflection. Same with outbound qualification. Same with the messier real-world versions people actually type, like “our reps are drowning” or “we need faster follow-up after webinar signups.” That's where conversational AI, natural language processing (NLP), and intent detection actually earn their keep: turning loose phrasing into usable qualification criteria.

A better opener sounds like this: “What are you looking to improve?” Or: “Is this for lead routing, support deflection, or outbound qualification?” Not glamorous. Effective.

Ask about urgency early

Timeline shouldn't sit at the end like a housekeeping item. It changes everything. Someone researching for next quarter needs a different path than someone who has to launch this month because their VP dropped a deadline on Friday at 4:37 p.m. and now everyone's pretending it's reasonable.

Scope tells you more than company size usually does

This gets skipped way too often. Ask whether the project is for one rep, one department, or a 200-person revenue team. That's usually a better read on complexity than some generic employee-count field ever gives you.

Don't ask for budget head-on if you can help it

“What's your budget?” kills momentum fast. People get guarded. They hedge. They disappear. Ask sideways instead: “Are you comparing options mostly on fit, speed, or price?” You still learn how budget-sensitive they are, but now you've got context instead of a defensive answer.

Readiness comes last because that's when it becomes useful

This is where you get practical: what tools are already in place, who owns implementation internally, whether any approval process exists yet. Those details make lead scoring automation far sharper during conversational lead qualification. Before that point, they're just more boxes.
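Put together, the buying order above could be sketched as an ordered list the bot walks through, skipping anything it already knows. The keys are my own labels, and the urgency and readiness wording is a placeholder I wrote for the example.

```python
from typing import Optional, Set

# Buying order, not CRM order: problem first, readiness last.
BUYING_ORDER = [
    ("problem", "What are you looking to improve?"),
    ("urgency", "When do you need this in place?"),
    ("scope", "Is this for one rep, one department, or the whole revenue team?"),
    ("budget_sensitivity", "Are you comparing options mostly on fit, speed, or price?"),
    ("readiness", "What tools are already in place, and who owns implementation?"),
]


def next_question(answered: Set[str]) -> Optional[str]:
    """Return the first question in buying order that has not been answered yet."""
    for key, question in BUYING_ORDER:
        if key not in answered:
            return question
    return None  # everything answered: stop asking


print(next_question({"problem", "urgency"}))
```

Because answers can arrive out of order (someone volunteers their deadline in message one), the bot tracks what it knows rather than which step it is on.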

Salesmate says a lead qualification bot can handle about 80% of routine questions. Fine. Let it earn that number by doing useful work first. Then bring in targeted chatbot qualification questions. If you want to see how these flows shift by use case, here's one example: Real Estate Ai Chatbot That Qualifies Leads.

So if your bot still starts by asking for contact info before it understands the job somebody needs done, what is it actually qualifying?

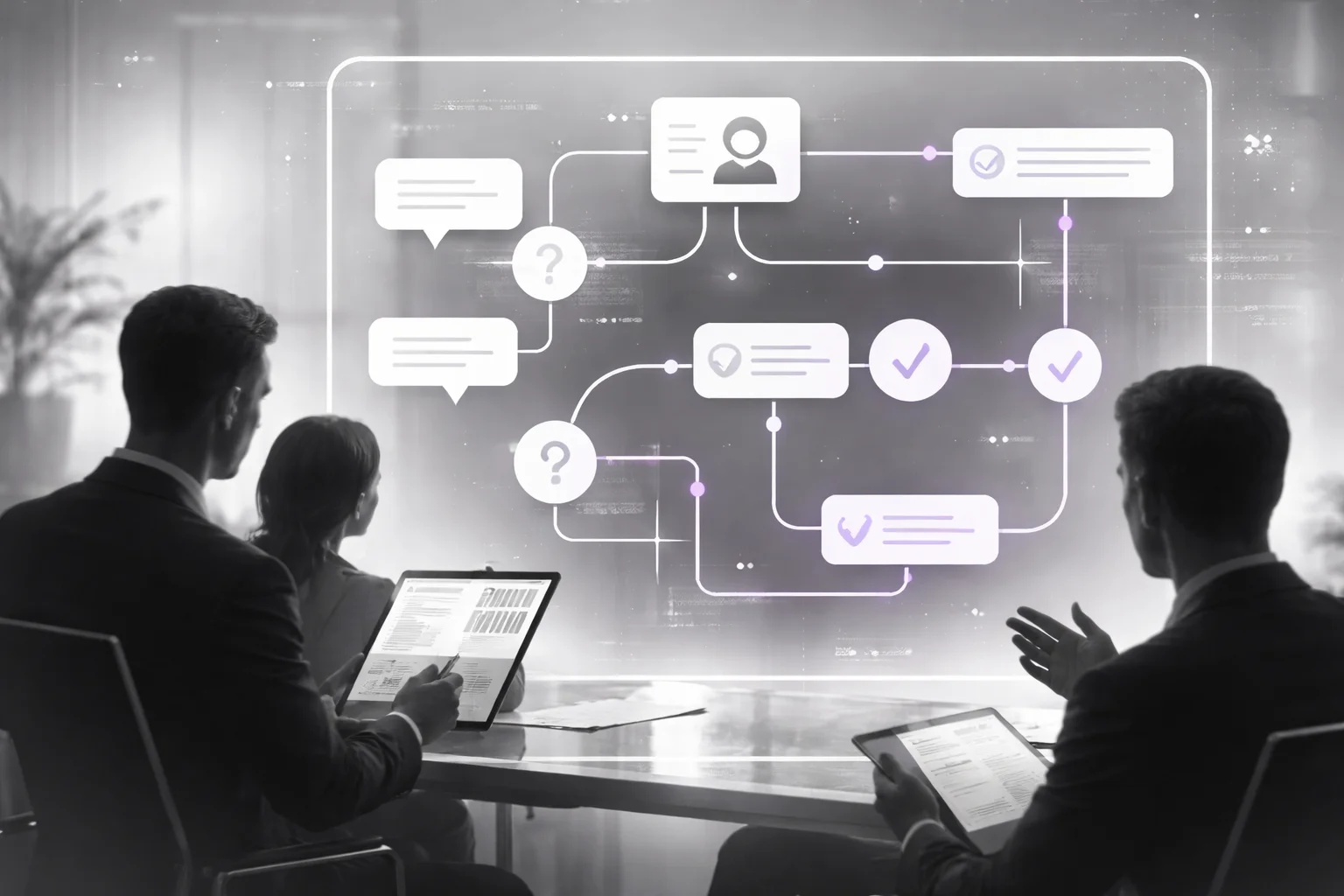

How to Build a Conversational Lead Qualification Bot

Everybody says the same thing first: the magic is in the model. Buy the smarter engine. Rewrite the prompt. Add a shinier AI sales chatbot. That's the part vendors demo because it looks good in 90 seconds, unlike a HubSpot or Salesforce sync quietly failing from Tuesday to Monday while sales keeps wondering why inbound volume "feels weird."

That story's incomplete. I'd argue it's mostly wrong.

Teams rarely lose because the bot isn't clever enough. They lose because the wiring underneath is sloppy: messy intent detection, branching logic that only works if buyers answer like they're taking a survey, and CRM handoffs that crack the second someone asks two things in one sentence.

A real example makes this obvious fast. Someone types, "Can this work with Salesforce and what does setup look like for a 40-person team?" That's not idle browsing. That's integration interest plus implementation scope plus team size, all in one line. I've seen bots treat that as generic top-of-funnel curiosity, fire back a help-center article, and never create a proper sales flag. Then everybody blames AI. Come on. That's bad intent design wearing an AI costume.
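To show what catching all three signals in one line means, here's a deliberately crude sketch using regular expressions. A real bot would lean on an intent model rather than patterns like these, and the patterns, intent names, and `sales_flag` rule are all assumptions made for the example.

```python
import re


def extract_signals(message: str) -> dict:
    """Pull every signal out of one message instead of picking a single intent."""
    text = message.lower()
    intents = []
    if re.search(r"\b(integrat\w*|salesforce|hubspot|work with)\b", text):
        intents.append("integration")
    if re.search(r"\b(setup|set up|implementation|rollout|onboarding)\b", text):
        intents.append("implementation")

    size_match = re.search(r"(\d+)[- ]person", text)
    team_size = int(size_match.group(1)) if size_match else None

    return {
        "intents": intents,
        "team_size": team_size,
        # Hypothetical rule: two or more intents, or a stated team size, flags sales.
        "sales_flag": len(intents) >= 2 or team_size is not None,
    }


msg = "Can this work with Salesforce and what does setup look like for a 40-person team?"
print(extract_signals(msg))
```

The failure described above is what you get when the function returns a single label. Returning a list of intents plus extracted values is the fix, whatever model sits behind it.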

The number people should pay attention to isn't about superhuman conversation anyway. A 2026 Jotform Blog report said 74% of customers prefer chatbots for simple, quick questions. Simple. Quick. Not a 20-message fake friendship before you ask about budget, timeline, or team size.

That's the piece people miss: speed has a boundary. Before that boundary, your lead qualification chatbot should answer easy asks immediately. After it, once buying signals show up, your conversational lead qualification flow needs to switch modes and start actual discovery instead of pretending every visitor wants the same canned path.

I wouldn't build this by starting with prompts. I'd start somewhere less glamorous and way more useful.

Define intents first. Then map qualification criteria. After that, write branches for ambiguity, strong intent, disqualification, and human escalation. Log every answer. Update lead scoring as the conversation changes, not just at the end. Push clean records into CRM with source, transcript, and next-step status attached. If that data doesn't land correctly, your reps spend Friday afternoon fixing junk entries by hand instead of calling good leads.
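Here's a minimal sketch of the logging and handoff part of that list: every answer is recorded, the score moves as the conversation moves, and the CRM payload carries source, transcript, and next-step status. The class, field names, and the 60-point threshold are hypothetical. Map them to whatever your CRM actually expects.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    source: str                                  # e.g. the page the chat started on
    transcript: list = field(default_factory=list)
    answers: dict = field(default_factory=dict)
    score: int = 0

    def record(self, question: str, answer: str, criterion: str, points: int) -> None:
        """Log the turn and update the score right away, not at the end."""
        self.transcript.append({"bot": question, "visitor": answer})
        self.answers[criterion] = answer
        self.score += points

    def next_step(self) -> str:
        return "route_to_sales" if self.score >= 60 else "continue"  # made-up threshold

    def to_crm_record(self) -> dict:
        """Build the payload a CRM sync would send. Field names are illustrative."""
        return {
            "source": self.source,
            "lead_score": self.score,
            "next_step": self.next_step(),
            "answers": dict(self.answers),
            "transcript": list(self.transcript),
        }


chat = Conversation(source="pricing_page")
chat.record("When do you need this live?", "This month", "timeline", 30)
chat.record("Who is it for?", "A 40-person sales team", "scope", 40)
print(chat.to_crm_record()["next_step"])
```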

For CTOs, production readiness isn't optimism and it definitely isn't "the model will figure it out." It's guardrails. Set fallback thresholds: the confidence level below which the bot stops guessing and either asks a clarifying question or escalates to a human. Watch drop-off by node. Track completion rate, routing accuracy, and which chatbot qualification questions keep producing garbage data or dead-end exits. Test weekly. Tune prompts monthly. If you're weighing custom work against off-the-shelf tools, read chatbot development services vs platforms.
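Two of those numbers, completion rate and drop-off by node, are easy to compute once you log where each session ended. This sketch assumes a made-up input shape: one `(last_node, completed)` pair per session.

```python
from collections import Counter
from typing import List, Tuple


def funnel_metrics(sessions: List[Tuple[str, bool]]) -> dict:
    """Summarize sessions given as (last node reached, whether the flow completed)."""
    total = len(sessions)
    completed = sum(1 for _, done in sessions if done)
    exits = Counter(node for node, done in sessions if not done)
    return {
        "completion_rate": completed / total if total else 0.0,
        "drop_off_by_node": dict(exits),
    }


sessions = [
    ("budget", False),
    ("budget", False),
    ("timeline", False),
    ("booked", True),
]
print(funnel_metrics(sessions))
```

If one node, say the budget question, accounts for most exits, that is the question to rewrite or move later in the flow.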

People love bots that keep talking because long conversations look impressive in screenshots. I think that's vanity dressed up as product strategy. The best bot answers fast, qualifies cleanly, routes at the right moment, and then gets out of the way — does yours?

Where this leaves us

A lead qualification chatbot works best when it feels like a useful conversation, not a dressed-up form, because better conversational discovery leads to better routing, cleaner lead scoring, and more sales-ready meetings.

So start with your qualification criteria, then map the actual questions buyers can answer naturally. Audit your CRM integration, handoff to sales, and lead scoring automation before you obsess over copy, because broken routing ruins good intent detection fast.

And watch the experience closely. If your AI sales chatbot asks chatbot qualification questions in your internal field order instead of the buyer’s decision order, people will bail, even if the natural language processing (NLP) looks smart on paper.

Most people get this wrong by treating conversational lead qualification like data capture with typing bubbles. The better way to think about it is simple: build a conversation that earns the next answer, then let the system do the sorting.

FAQ: Lead Qualification Chatbot

What is a lead qualification chatbot?

A lead qualification chatbot is a conversational AI tool that talks with website visitors, asks qualification questions, and decides who should go to sales, nurture, or support. Instead of dumping people into static data capture forms, it uses conversational discovery to collect details like need, timeline, company size, and budget. The goal is simple: give your team cleaner pipeline and faster handoff to sales.

How does a lead qualification chatbot work?

A lead qualification chatbot starts a conversation, uses intent detection and natural language processing (NLP) to understand replies, and asks follow-up questions based on qualification criteria. It can score answers in real time, apply lead scoring automation, and route the lead to a rep, meeting scheduler, or nurture flow. According to Salesforce, these bots can also sync captured data with CRM integration and marketing automation systems.

What questions should a lead qualification chatbot ask?

The best chatbot qualification questions focus on the few things that actually predict sales readiness: problem, company size, timeline, budget range, and decision-making role. Wonderchat notes that lead qualification bots commonly ask about budget, company size, timeline, and specific needs before scoring the lead. Keep it tight, because every extra question adds friction.

How do you make conversational lead qualification feel helpful instead of annoying?

Start with context, not interrogation. A good conversational lead qualification flow explains why it's asking, uses plain language, and adapts based on previous answers so the exchange feels like help, not a form with a fake smile. Honestly, bad conversational UX usually comes from stuffing every sales question into the first chat.

Can a lead qualification chatbot integrate with a CRM?

Yes, and if it can't, don't buy it. A useful AI sales chatbot should map responses into CRM fields like company size, use case, timeline, source, and lead score so your team doesn't retype anything later. That's what makes qualification routing, reporting, and follow-up actually work.

How do you choose between BANT, CHAMP, or a custom qualification framework?

Pick the framework that matches how your buyers actually buy. BANT (Budget, Authority, Need, Timeline) works when budget and timing are clear early, CHAMP (Challenges, Authority, Money, Prioritization) is better when pain and priorities matter more than procurement details, and a custom model usually wins if your sales cycle is messy or multi-stakeholder. Look, most teams don't need a perfect framework. They need one the chatbot can apply consistently.

How should a lead qualification chatbot handle vague answers or missing information?

It should clarify once, offer structured choices, and move on if the person still doesn't know. For example, if someone says their timeline is “soon,” the bot can ask whether that means this month, this quarter, or later. Good dialogue design patterns reduce drop-off because they don't trap people in endless back-and-forth.
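That clarify-once rule can be sketched like this. The function name, the return shape, and the `"unknown"` marker are assumptions for illustration. The behavior to keep is that the bot asks a second time at most once, then moves on.

```python
TIMELINE_CHOICES = ("this month", "this quarter", "later")


def handle_timeline(answer: str, already_clarified: bool) -> dict:
    """Accept a clear answer, clarify a vague one once, then stop asking."""
    normalized = answer.strip().lower()
    if normalized in TIMELINE_CHOICES:
        return {"timeline": normalized, "prompt": None}
    if not already_clarified:
        return {
            "timeline": None,
            "prompt": "Does that mean this month, this quarter, or later?",
        }
    # Still vague after one clarification: record it and move on.
    return {"timeline": "unknown", "prompt": None}


print(handle_timeline("soon", already_clarified=False))
print(handle_timeline("not sure", already_clarified=True))
```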

How do you measure whether a lead qualification chatbot is working?

Track speed to lead, qualified meeting rate, completion rate, sales acceptance rate, and downstream revenue, not just chat volume. According to Codertrove, 55% of companies report that chatbots help generate more high-quality leads, and 67% of business leaders say chatbots have increased sales. Those numbers matter only if your own lead scoring, handoff to sales, and conversion data back them up.